How to Scrape Wikipedia Tables into CSV with Python

Wikipedia is full of structured data hiding in plain sight. Government stats, sports records, election results, company lists, population tables — a huge amount of it lives inside regular HTML tables.

The good news: many Wikipedia pages can be scraped with plain requests, BeautifulSoup, and pandas.read_html() in just a few lines.

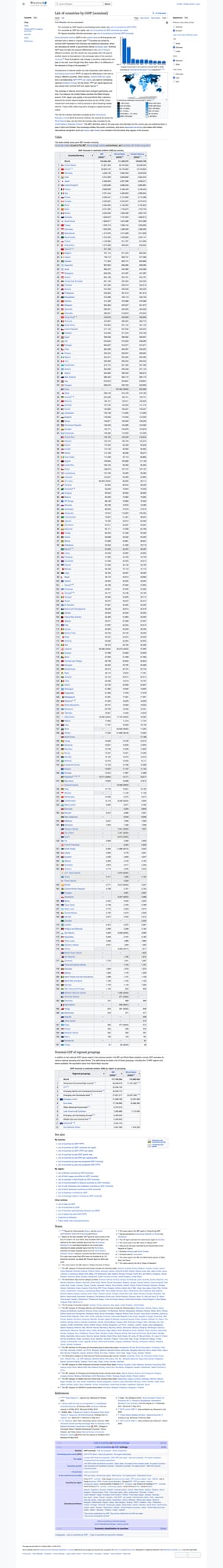

In this tutorial, we’ll scrape the tables from this page:

https://en.wikipedia.org/wiki/List_of_countries_by_GDP_(nominal)

Then we’ll export the clean result to CSV.

This is one of the fastest ways to turn a public HTML page into a real dataset you can analyze.

Wikipedia is a friendly starting point. When you graduate to collecting tables from many public sites, ProxiesAPI gives you one fetch interface while your parsing workflow stays the same.

Why Wikipedia is a great scraping target

Wikipedia is ideal for data extraction because:

- many pages are server-rendered HTML

- tables often use the predictable wikitable class

- links and citations are visible in the raw markup

- pages are usually stable enough to support reproducible scripts

For quick projects, you can often skip browser automation entirely.

Setup

Install the libraries:

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml pandas html5lib

Why both lxml and html5lib?

- lxml is fast and works well for general parsing

- pandas.read_html() tries lxml first by default and falls back to BeautifulSoup with html5lib if lxml cannot parse the page

Step 1: Fetch the page HTML

Start by downloading the page once and checking that the content is real HTML, not a blocked or partial response.

import requests

URL = "https://en.wikipedia.org/wiki/List_of_countries_by_GDP_(nominal)"

HEADERS = {
    "User-Agent": "Mozilla/5.0 (compatible; table-scraper/1.0; +https://example.com/bot)"
}

def fetch_html(url: str) -> str:
    # timeout=(connect seconds, read seconds)
    response = requests.get(url, headers=HEADERS, timeout=(10, 30))
    response.raise_for_status()
    return response.text

html = fetch_html(URL)
print("downloaded characters:", len(html))
print(html[:200])

Example terminal output

downloaded characters: 458327

<!DOCTYPE html>

<html class="client-nojs vector-feature-language-in-header-enabled ...">
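One way to make the "real HTML" check explicit is a small helper that inspects the response before parsing. The sketch below is an assumption rather than part of the original script: looks_like_table_page is a hypothetical name, and its two checks (an HTML content type and at least one table tag) are a minimal heuristic, not a guarantee that the page is complete.

```python
def looks_like_table_page(content_type: str, body: str) -> bool:
    """Heuristic check that a response is an HTML page containing a table."""
    # Content-Type usually looks like "text/html; charset=UTF-8"
    is_html = content_type.lower().startswith("text/html")
    # A page with no <table tag cannot yield any DataFrames
    has_table = "<table" in body.lower()
    return is_html and has_table


# Small inline samples, so the check can be tried without a network call
print(looks_like_table_page("text/html; charset=UTF-8", "<html><table></table></html>"))
print(looks_like_table_page("application/json", '{"error": "blocked"}'))
```

With requests, the two arguments would typically be response.headers.get("Content-Type", "") and response.text.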

Step 2: Inspect the tables on the page

Wikipedia pages often contain multiple tables. Before exporting anything, count them and inspect their headers.

from bs4 import BeautifulSoup

def list_tables(html: str) -> None:
    soup = BeautifulSoup(html, "lxml")
    tables = soup.select("table.wikitable")
    print("wikitable count:", len(tables))

    # Show up to ten header cells for each of the first five tables
    for i, table in enumerate(tables[:5], start=1):
        headers = [th.get_text(" ", strip=True) for th in table.select("tr th")[:10]]
        print(f"table {i} headers:", headers)

list_tables(html)

Example output

wikitable count: 1

table 1 headers: ['Country/Territory', 'IMF[1][13]', 'World Bank[14]', 'United Nations[15]']

That tells us exactly which table we want.

Step 3: Parse the table with pandas

For Wikipedia tables, pandas.read_html() is usually the fastest route from HTML to DataFrame.

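from io import StringIO

import pandas as pd

def parse_tables(html: str) -> list[pd.DataFrame]:
    # Wrap the markup in StringIO: passing a literal HTML string
    # to read_html() is deprecated in recent pandas versions
    return pd.read_html(StringIO(html))

tables = parse_tables(html)
print("total tables found:", len(tables))
print(tables[0].head())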

Sample output

total tables found: 7
  Country/Territory  IMF[1][13]  World Bank[14]  United Nations[15]
0             World   110047109       105435540           100834796
1     United States    29167779        27360935            27360935
2             China    18273357        17794782            17794782

Because read_html() returns every table it finds, the job is not “parse HTML manually.” The job is “choose the right table and clean it.”
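read_html() also accepts a match argument, a string or regular expression that limits the result to tables containing matching text. The sketch below uses a tiny inline HTML sample (made up for illustration) so it runs without a network call, and it wraps the markup in StringIO because recent pandas versions deprecate passing literal HTML strings.

```python
from io import StringIO

import pandas as pd

# Made-up sample markup: one data table and one unrelated side table
SAMPLE_HTML = """
<table>
  <tr><th>Country</th><th>GDP</th></tr>
  <tr><td>Aland</td><td>100</td></tr>
  <tr><td>Borduria</td><td>200</td></tr>
</table>
<table>
  <tr><th>Note</th></tr>
  <tr><td>Unrelated side table</td></tr>
</table>
"""

all_tables = pd.read_html(StringIO(SAMPLE_HTML))
# match keeps only tables whose text matches the given string or regex
country_tables = pd.read_html(StringIO(SAMPLE_HTML), match="Country")

print("all tables:", len(all_tables))
print("tables mentioning Country:", len(country_tables))
print(country_tables[0])
```

Narrowing the candidates this way can reduce how much filtering the selection step has to do, though checking column names afterwards is still a sensible safeguard.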

Step 4: Select the correct DataFrame

A good pattern is to search by expected column names instead of relying on table index only.

def choose_gdp_table(tables: list[pd.DataFrame]) -> pd.DataFrame:
    for df in tables:
        columns = [str(col) for col in df.columns]
        # The GDP table is the one with both a country column and an IMF column
        if any("Country" in col for col in columns) and any("IMF" in col for col in columns):
            return df
    raise ValueError("Could not find GDP table")

gdp_df = choose_gdp_table(tables)
print(gdp_df.head(10))

That makes the script more robust if Wikipedia adds or reorders side tables later.

Step 5: Clean the columns

Wikipedia column names often contain citation markers or multi-level headers. Let’s normalize them into something easier to work with.

def normalize_columns(df: pd.DataFrame) -> pd.DataFrame:
    clean = df.copy()
    names = []
    for col in clean.columns:
        # Collapse runs of whitespace (including non-breaking spaces)
        text = " ".join(str(col).split())
        # Drop citation markers such as "[1][13]", then build a snake_case name
        text = text.split("[")[0].strip().lower()
        names.append(text.replace("/", "_").replace(" ", "_"))
    clean.columns = names
    return clean

gdp_df = normalize_columns(gdp_df)
print(gdp_df.columns.tolist())

Example output

['country_territory', 'imf', 'world_bank', 'united_nations']

Now the data is much easier to reference from code.
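When a table has stacked header rows, read_html() returns a MultiIndex for the columns, and str(col) then produces a tuple-like string such as "('GDP', 'IMF')". One possible way to handle that case is to join the levels into a single string before normalizing. The helper name flatten_columns and the sample frame below are hypothetical, shown only to illustrate the idea.

```python
import pandas as pd


def flatten_columns(df: pd.DataFrame) -> pd.DataFrame:
    """Join multi-level column headers into single strings."""
    flat = df.copy()
    if isinstance(flat.columns, pd.MultiIndex):
        names = []
        for levels in flat.columns:
            # Keep each distinct level once, in order, and skip pandas'
            # "Unnamed: ..." placeholders for empty header cells
            parts = []
            for level in levels:
                text = str(level).strip()
                if text and not text.startswith("Unnamed") and text not in parts:
                    parts.append(text)
            names.append(" ".join(parts))
        flat.columns = names
    return flat


# Made-up frame with a two-level header, similar to what stacked <th> rows produce
sample = pd.DataFrame(
    [["Aland", 100, 90]],
    columns=pd.MultiIndex.from_tuples(
        [("Country/Territory", "Country/Territory"), ("GDP", "IMF"), ("GDP", "World Bank")]
    ),
)
print(flatten_columns(sample).columns.tolist())
```

Frames with ordinary single-level columns pass through unchanged, so a helper like this could run before normalize_columns on every table.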

Step 6: Remove citations and blank rows

Some pages have citation artifacts, duplicated header rows, or footnote-only values. Here’s a practical cleanup pass.

def clean_table(df: pd.DataFrame) -> pd.DataFrame:
    clean = df.copy()

    # Drop rows that are entirely empty
    clean = clean.dropna(how="all")

    # Remove repeated header rows if they slipped into the body
    clean = clean[clean["country_territory"] != "Country/Territory"].copy()

    # Strip whitespace from string cells; leave numbers and missing values alone
    # (astype(str) would turn NaN into the literal text "nan")
    for col in clean.columns:
        if clean[col].dtype == object:
            clean[col] = clean[col].map(
                lambda v: v.strip() if isinstance(v, str) else v
            )

    return clean.reset_index(drop=True)

gdp_df = clean_table(gdp_df)

print(gdp_df.head())
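The cleanup above handles blank and repeated rows; citation markers inside cell values (for example "United States[n 1]") are a separate concern. Here is a minimal sketch of one way to strip them, assuming markers always appear as short bracketed tokens and that genuine data never contains square brackets. strip_citations is a hypothetical helper and the sample rows are made up.

```python
import re

import pandas as pd

# Bracketed tokens such as [1], [13] or [n 1]; assumes real data never uses brackets
CITATION_RE = re.compile(r"\[[^\]]*\]")


def strip_citations(df: pd.DataFrame) -> pd.DataFrame:
    """Remove bracketed citation markers from every string cell."""
    clean = df.copy()
    for col in clean.columns:
        # Only touch string cells; numbers and missing values pass through
        clean[col] = clean[col].map(
            lambda v: CITATION_RE.sub("", v).strip() if isinstance(v, str) else v
        )
    return clean


sample = pd.DataFrame(
    {"country_territory": ["United States[n 1]", "China[2]"], "imf": [1, 2]}
)
print(strip_citations(sample))
```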

Step 7: Save the result to CSV

def save_csv(df: pd.DataFrame, path: str) -> None:
    # index=False keeps the pandas row index out of the file
    df.to_csv(path, index=False, encoding="utf-8")

save_csv(gdp_df, "wikipedia_gdp_nominal.csv")
print("saved rows:", len(gdp_df))

Output

saved rows: 210

Now you have a clean CSV you can open in Excel or load directly into pandas for analysis.
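A quick way to confirm the export is to read the file back and compare it with what was written. The sketch below uses a made-up two-row frame and a temporary directory, so it does not touch the real output file.

```python
import tempfile
from pathlib import Path

import pandas as pd

# Made-up rows, including non-ASCII names to exercise the UTF-8 encoding
sample = pd.DataFrame(
    {"country_territory": ["Côte d'Ivoire", "Türkiye"], "imf": [100, 200]}
)

with tempfile.TemporaryDirectory() as tmp_dir:
    path = Path(tmp_dir) / "sample.csv"
    sample.to_csv(path, index=False, encoding="utf-8")
    # Read the file back with the same encoding and compare
    loaded = pd.read_csv(path, encoding="utf-8")

print(loaded.shape == sample.shape)
print(loaded["country_territory"].tolist())
```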

Full working script

from io import StringIO

import pandas as pd
import requests
from bs4 import BeautifulSoup

URL = "https://en.wikipedia.org/wiki/List_of_countries_by_GDP_(nominal)"

HEADERS = {
    "User-Agent": "Mozilla/5.0 (compatible; wikipedia-table-scraper/1.0)"
}

def fetch_html(url: str) -> str:
    response = requests.get(url, headers=HEADERS, timeout=(10, 30))
    response.raise_for_status()
    return response.text

def list_tables(html: str) -> None:
    soup = BeautifulSoup(html, "lxml")
    tables = soup.select("table.wikitable")
    print("wikitable count:", len(tables))

def parse_tables(html: str) -> list[pd.DataFrame]:
    # StringIO avoids the deprecated literal-HTML input path in pandas
    return pd.read_html(StringIO(html))

def choose_target_table(tables: list[pd.DataFrame]) -> pd.DataFrame:
    for df in tables:
        cols = [str(col) for col in df.columns]
        if any("Country" in c for c in cols) and any("IMF" in c for c in cols):
            return df
    raise ValueError("Target table not found")

def normalize_columns(df: pd.DataFrame) -> pd.DataFrame:
    clean = df.copy()
    # Drop citation markers, then build snake_case names
    clean.columns = [
        str(col).split("[")[0].strip().lower().replace("/", "_").replace(" ", "_")
        for col in clean.columns
    ]
    return clean

def clean_table(df: pd.DataFrame) -> pd.DataFrame:
    clean = df.copy().dropna(how="all")
    clean = clean[clean["country_territory"] != "Country/Territory"].copy()
    for col in clean.columns:
        if clean[col].dtype == object:
            # Strip only string cells so missing values stay missing
            clean[col] = clean[col].map(
                lambda v: v.strip() if isinstance(v, str) else v
            )
    return clean.reset_index(drop=True)

def save_csv(df: pd.DataFrame, path: str) -> None:
    df.to_csv(path, index=False, encoding="utf-8")

if __name__ == "__main__":
    html = fetch_html(URL)
    list_tables(html)
    tables = parse_tables(html)
    df = choose_target_table(tables)
    df = normalize_columns(df)
    df = clean_table(df)
    save_csv(df, "wikipedia_gdp_nominal.csv")
    print(df.head())
    print(f"saved {len(df)} rows")

When BeautifulSoup is the better choice

pandas.read_html() is fast, but it isn’t always enough.

Use BeautifulSoup directly when you need to:

- extract links from cells

- keep footnotes or metadata

- handle nested tables manually

- target one specific table by section heading

- clean weird row spans and col spans yourself

For example, to grab the first wikitable manually:

soup = BeautifulSoup(html, "lxml")
first_table = soup.select_one("table.wikitable")
# Join the text of each cell with newlines and preview the first 500 characters
print(first_table.get_text("\n", strip=True)[:500])

That’s especially helpful when you want both the displayed text and the underlying link URL.
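As a sketch of that idea, the helper below collects each link's visible text together with its href from the cells of a table. The function name extract_cell_links and the inline sample markup are hypothetical. The sample uses Python's built-in html.parser so it runs without extra parser packages; lxml should behave the same way for markup this simple.

```python
from bs4 import BeautifulSoup

# Made-up markup shaped like a small wikitable
SAMPLE_HTML = """
<table class="wikitable">
  <tr><th>Country</th><th>GDP</th></tr>
  <tr><td><a href="/wiki/Aland">Aland</a></td><td>100</td></tr>
  <tr><td><a href="/wiki/Borduria">Borduria</a></td><td>200</td></tr>
</table>
"""


def extract_cell_links(html: str) -> list[dict[str, str]]:
    """Return the text and href of every link inside the first wikitable."""
    soup = BeautifulSoup(html, "html.parser")
    table = soup.select_one("table.wikitable")
    if table is None:
        return []
    links = []
    for anchor in table.select("td a[href]"):
        links.append({"text": anchor.get_text(strip=True), "href": anchor["href"]})
    return links


for link in extract_cell_links(SAMPLE_HTML):
    print(link["text"], "->", link["href"])
```

Wikipedia hrefs are usually relative paths, so urllib.parse.urljoin with the page URL is one way to turn them into absolute links.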

Using ProxiesAPI for the fetch layer

Wikipedia itself usually does not need a proxy service for light workloads. But if you’re building a workflow that scrapes many public sites with the same code pattern, it helps to standardize the network layer.

The ProxiesAPI request format is:

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://en.wikipedia.org/wiki/List_of_countries_by_GDP_(nominal)"

Python version:

from urllib.parse import quote_plus

import requests

def fetch_via_proxiesapi(target_url: str, api_key: str) -> str:
    # URL-encode the target so its own characters don't break the query string
    proxy_url = (
        "http://api.proxiesapi.com/?key="
        f"{api_key}&url={quote_plus(target_url)}"
    )
    response = requests.get(proxy_url, timeout=(10, 30))
    response.raise_for_status()
    return response.text

Again, the parser code does not change. Only the fetch function changes.

Final checklist

Before shipping a Wikipedia table scraper into production, verify:

- you identified the correct table instead of assuming tables[0]

- your column names are normalized

- blank rows are removed

- numeric columns are cleaned if you want analysis-ready values

- the output CSV opens cleanly in UTF-8

That gives you a small, reliable script you can reuse across hundreds of Wikipedia data pages.
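For the numeric-columns item in the checklist, one possible approach is to strip thousands separators and convert with pd.to_numeric, turning anything unparseable into a missing value. The helper name to_number and the sample values are assumptions made for illustration.

```python
import pandas as pd


def to_number(series: pd.Series) -> pd.Series:
    """Convert text like '29,167,779' to numbers; unparseable values become NaN."""
    # Remove thousands separators, then let pandas do the conversion
    text = series.astype(str).str.replace(",", "", regex=False).str.strip()
    return pd.to_numeric(text, errors="coerce")


sample = pd.Series(["29,167,779", "18273357", "N/A", None])
converted = to_number(sample)
print(converted.tolist())
```

Coercing to NaN keeps the row in place, so the missing values can be reviewed later instead of silently disappearing.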
