How to Scrape Booking.com Hotel Prices with Python (Using ProxiesAPI)

Booking.com is a classic “high-value” scraping target: hotel names, nightly prices, and review scores are exactly the inputs you need for deal alerts, market research, or competitive pricing.

The catch: Booking pages are dynamic, and a scraper breaks easily if you guess selectors or hammer the site with requests.

In this guide we’ll build a real Python scraper that:

- fetches a Booking.com search results URL via ProxiesAPI

- parses hotel cards (name, price, score, location blurb)

- exports clean JSON

- includes a selector-debug workflow so you can fix it quickly when Booking changes markup

When you scrape at scale, failures are usually network-level: rate limits, transient blocks, and inconsistent responses. ProxiesAPI gives you a simple proxy-backed fetch URL so your scraper keeps moving without you building proxy plumbing.

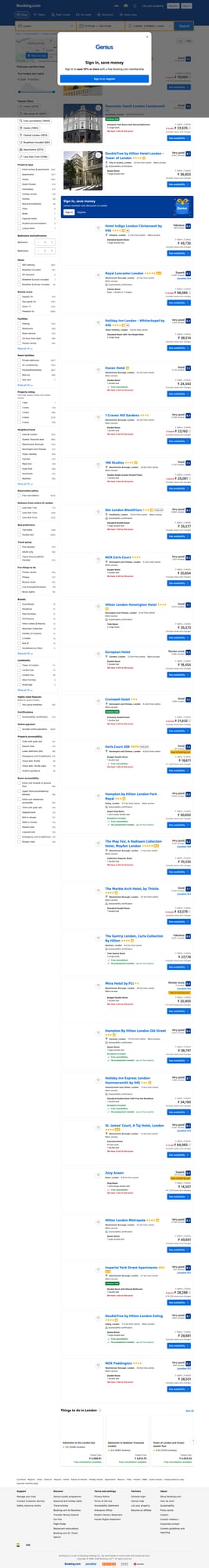

What we’re scraping (page shape)

We’ll scrape a Booking.com search results page, like:

https://www.booking.com/searchresults.html?ss=London&checkin=2026-04-10&checkout=2026-04-12&group_adults=2&no_rooms=1&group_children=0

On this page, hotels appear as repeating “property cards”. Booking’s HTML changes often, so the key is:

- Fetch HTML reliably (timeouts + retries)

- Use multiple selectors + fallbacks

- Validate output by spot-checking 5–10 items

Important: Booking may localize currency and content, and may show different prices to different users. Treat scraped prices as observations, not ground truth.
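The search URL shown earlier in this section is a plain query string over a handful of parameters, so it can be assembled programmatically. The sketch below is a minimal illustration: build_search_url is a hypothetical helper name, and the parameter set is limited to the ones visible in the example URL (Booking may accept or require others).

```python
from urllib.parse import urlencode

SEARCH_BASE = "https://www.booking.com/searchresults.html"


def build_search_url(city: str, checkin: str, checkout: str,
                     adults: int = 2, rooms: int = 1, children: int = 0) -> str:
    """Build a search URL from the parameters seen in the example URL.

    Dates are ISO strings (YYYY-MM-DD). Hypothetical helper, not a Booking API.
    """
    params = {
        "ss": city,
        "checkin": checkin,
        "checkout": checkout,
        "group_adults": adults,
        "no_rooms": rooms,
        "group_children": children,
    }
    # urlencode() escapes spaces and non-ASCII characters in the city name.
    return SEARCH_BASE + "?" + urlencode(params)


url = build_search_url("London", "2026-04-10", "2026-04-12")
print(url)
```

Called with the values above, this reproduces the example URL; a city such as "New York" is encoded as ss=New+York.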

Setup

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml

We’ll use:

- requests for HTTP

- BeautifulSoup (with the lxml parser) for parsing

Step 1: Fetch HTML using ProxiesAPI

ProxiesAPI’s core integration is simple: you call a single URL that fetches the target for you.

API_KEY="YOUR_PROXIESAPI_KEY"

TARGET="https://www.booking.com/searchresults.html?ss=London&checkin=2026-04-10&checkout=2026-04-12&group_adults=2&no_rooms=1&group_children=0"

# The target URL has its own query string, so let curl URL-encode it.
curl -s -G "http://api.proxiesapi.com/" --data-urlencode "key=$API_KEY" --data-urlencode "url=$TARGET" | head -n 20
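One detail that is easy to miss here: the target URL carries its own query string, so it needs to be percent-encoded before it is placed in the url parameter. The sketch below uses only the standard library to show what a generic query-string parser sees with and without encoding. The key value is a placeholder, the target is shortened for readability, and how ProxiesAPI itself parses the request is an assumption.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

API = "http://api.proxiesapi.com/"
TARGET = (
    "https://www.booking.com/searchresults.html"
    "?ss=London&checkin=2026-04-10&checkout=2026-04-12"
)

# Naive concatenation: the target's "&checkin=..." leaks into the outer query.
naive = f"{API}?key=KEY&url={TARGET}"
naive_params = parse_qs(urlsplit(naive).query)

# Encoded: urlencode() escapes "?", "&" and "=" inside the target URL.
encoded = API + "?" + urlencode({"key": "KEY", "url": TARGET})
encoded_params = parse_qs(urlsplit(encoded).query)

print("naive url param:  ", naive_params["url"][0])
print("encoded url param:", encoded_params["url"][0])
```

In the naive case the url parameter stops at ss=London and the dates become separate top-level parameters; in the encoded case the full target survives intact.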

In Python, we’ll wrap this into a fetch_html() function with:

- a real timeout

- basic retries

- a desktop User-Agent (helps avoid “broken” responses)

import time
import urllib.parse

import requests

API_KEY = "YOUR_PROXIESAPI_KEY"
TIMEOUT = (10, 60)  # (connect, read) timeouts in seconds

session = requests.Session()
session.headers.update({
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/123.0 Safari/537.36",
    "Accept-Language": "en-US,en;q=0.9",
})


def proxiesapi_url(target_url: str) -> str:
    # urlencode() percent-encodes the target so its own query string survives.
    return "http://api.proxiesapi.com/?" + urllib.parse.urlencode({
        "key": API_KEY,
        "url": target_url,
    })


def fetch_html(target_url: str, retries: int = 3, backoff: float = 2.0) -> str:
    url = proxiesapi_url(target_url)
    last_err = None

    for attempt in range(1, retries + 1):
        try:
            r = session.get(url, timeout=TIMEOUT)
            r.raise_for_status()

            # Quick sanity check: Booking pages are large; tiny HTML is often a block/consent page.
            if len(r.text) < 20_000:
                raise RuntimeError(f"Suspiciously small response: {len(r.text)} characters")

            return r.text
        except Exception as e:
            last_err = e
            print(f"attempt {attempt}/{retries} failed: {e}")
            if attempt < retries:  # no point sleeping after the final attempt
                sleep_s = backoff ** attempt
                print(f"sleeping {sleep_s:.1f}s before retrying")
                time.sleep(sleep_s)

    raise RuntimeError(f"Failed after {retries} attempts: {last_err}")
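The retry loop above waits backoff ** attempt seconds after a failure. A common refinement is to cap the delay and add random jitter so that parallel workers do not retry in lockstep. This is a sketch of that idea, not part of the scraper above; backoff_delay, cap, and jitter are hypothetical names.

```python
import random
from typing import Optional


def backoff_delay(attempt: int, base: float = 2.0, cap: float = 60.0,
                  jitter: float = 0.25,
                  rng: Optional[random.Random] = None) -> float:
    """Delay in seconds after the given failed attempt (1-based)."""
    rng = rng or random.Random()
    delay = min(cap, base ** attempt)
    # Add a random extra wait of up to `jitter` times the delay.
    return delay + rng.uniform(0, jitter * delay)


# Without jitter, the schedule matches backoff ** attempt.
schedule = [backoff_delay(a, jitter=0.0) for a in (1, 2, 3)]
print(schedule)  # [2.0, 4.0, 8.0]
```

To try it, the sleep_s calculation in fetch_html could call backoff_delay(attempt) instead of computing backoff ** attempt directly.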

Step 2: Inspect the HTML and choose robust selectors

Booking markup evolves, so instead of relying on one brittle class name, we’ll try multiple patterns.

At the time of writing, Booking uses “property card” containers with data attributes like:

data-testid="property-card"

Try this local inspection:

from bs4 import BeautifulSoup

html = fetch_html("https://www.booking.com/searchresults.html?ss=London&checkin=2026-04-10&checkout=2026-04-12&group_adults=2&no_rooms=1&group_children=0")
soup = BeautifulSoup(html, "lxml")

cards = soup.select('[data-testid="property-card"]')
print("cards:", len(cards))

# Guard the preview so an empty result does not raise IndexError.
if cards:
    print(cards[0].get_text(" ", strip=True)[:300])

If the card count is 0, don’t guess randomly. Search the HTML for something you can see on the page (a hotel name, or text such as “Property” or “Review score”).
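When the selector comes back empty, one quick way to see what the markup offers is to count the data-testid values that actually appear in the HTML. The sketch below uses only the standard library's html.parser, so it runs without the parsing dependencies; count_testids is a hypothetical helper, and the sample markup is made up and far simpler than a real Booking page.

```python
from collections import Counter
from html.parser import HTMLParser


class DataTestIdCounter(HTMLParser):
    """Count every data-testid attribute value seen in a document."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "data-testid" and value:
                self.counts[value] += 1


def count_testids(html: str) -> Counter:
    parser = DataTestIdCounter()
    parser.feed(html)
    parser.close()
    return parser.counts


# Made-up sample markup, only to show the output shape.
sample = """
<div data-testid="property-card"><h3 data-testid="title">Hotel A</h3></div>
<div data-testid="property-card"><h3 data-testid="title">Hotel B</h3>
  <span data-testid="price-and-discounted-price">£ 310</span></div>
"""

counts = count_testids(sample)
for testid, n in counts.most_common():
    print(f"{n:>4}  {testid}")
```

Run against the fetched page, values that repeat roughly once per visible hotel are reasonable candidates for the current card container.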

Step 3: Parse hotel cards into structured data

We’ll extract:

- name

- price per night (as text)

- review score (as text)

- short location / distance blurb (if present)

- booking link (relative → absolute)

import re
from urllib.parse import urljoin

from bs4 import BeautifulSoup

BASE = "https://www.booking.com"


# Note: the "str | None" hints below need Python 3.10 or newer.
def clean_text(x: str | None) -> str | None:
    """Collapse runs of whitespace; return None for empty or missing text."""
    if not x:
        return None
    x = re.sub(r"\s+", " ", x).strip()
    return x or None


def parse_cards(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "lxml")
    out = []

    cards = soup.select('[data-testid="property-card"]')

    for c in cards:
        # Name: try the known test ids first, then fall back to the card heading.
        name_el = (
            c.select_one('[data-testid="title"]')
            or c.select_one('[data-testid="property-card-title"]')
            or c.select_one("h3")
        )
        name = clean_text(name_el.get_text(" ", strip=True) if name_el else None)

        # Price text: Booking can show “Price for 2 nights” etc; we keep as raw text.
        price_el = (
            c.select_one('[data-testid="price-and-discounted-price"]')
            or c.select_one('[data-testid="price"]')
        )
        price = clean_text(price_el.get_text(" ", strip=True) if price_el else None)

        # Review score. The original second fallback ('[data-testid="review-score"] div')
        # could never match when this selector fails, so it is dropped.
        score_el = c.select_one('[data-testid="review-score"]')
        score = clean_text(score_el.get_text(" ", strip=True) if score_el else None)

        # Location/distance snippet
        loc_el = (
            c.select_one('[data-testid="location"]')
            or c.select_one('[data-testid="address"]')
        )
        location = clean_text(loc_el.get_text(" ", strip=True) if loc_el else None)

        # Link: resolve relative hrefs against BASE and drop the query string.
        a = c.select_one('a[href*="/hotel/"]') or c.select_one("a")
        href = a.get("href") if a else None
        if href:
            href = urljoin(BASE, href).split("?")[0]

        # Skip obviously empty rows
        if not name and not price and not href:
            continue

        out.append({
            "name": name,
            "price_text": price,
            "review_score_text": score,
            "location_text": location,
            "url": href,
        })

    return out
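The parser above keeps price and score as raw text on purpose. If you need numbers for sorting or alerts, a separate normalization step is one option. The sketch below is a rough heuristic, not a description of Booking's formats: parse_price_text and parse_score_text are hypothetical helpers, the sample strings are invented, and the price pattern assumes a currency symbol or three-letter code followed by a number that uses commas as thousands separators.

```python
import re
from typing import Optional, Tuple

# Assumption: currency symbol or ISO-style code, then digits with "," thousands separators.
PRICE_RE = re.compile(r"([$€£₹¥]|[A-Z]{3})\s*(\d[\d,]*(?:\.\d+)?)")
SCORE_RE = re.compile(r"\d{1,2}(?:\.\d)?")


def parse_price_text(text: Optional[str]) -> Optional[Tuple[str, float]]:
    """Return (currency, amount) or None when no price-like token is found."""
    if not text:
        return None
    m = PRICE_RE.search(text)
    if not m:
        return None
    return m.group(1), float(m.group(2).replace(",", ""))


def parse_score_text(text: Optional[str]) -> Optional[float]:
    """Return the first number in the text if it looks like a 0-10 score."""
    if not text:
        return None
    m = SCORE_RE.search(text)
    if not m:
        return None
    score = float(m.group(0))
    return score if 0 <= score <= 10 else None


# Invented sample strings, for illustration only.
print(parse_price_text("₹ 12,345"))
print(parse_price_text("Price for 2 nights £ 310"))
print(parse_score_text("Scored 8.7"))
```

Locales that write 1.234,56 would be misread by this pattern, so it is worth keeping the raw text alongside any parsed value.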

Terminal-style run

if __name__ == "__main__":
    target = "https://www.booking.com/searchresults.html?ss=London&checkin=2026-04-10&checkout=2026-04-12&group_adults=2&no_rooms=1&group_children=0"

    html = fetch_html(target)
    hotels = parse_cards(html)

    print("hotels:", len(hotels))
    for h in hotels[:3]:
        print(h)

Typical output will look like:

hotels: 25

{'name': '...', 'price_text': '₹ ...', 'review_score_text': 'Scored ...', 'location_text': '...', 'url': 'https://www.booking.com/hotel/...'}

...

Export to JSON

import json

with open("booking_hotels.json", "w", encoding="utf-8") as f:
    json.dump(hotels, f, ensure_ascii=False, indent=2)

print("wrote booking_hotels.json", len(hotels))
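Because prices vary by user, currency, and time, it can help to store each run as a dated observation rather than a bare list. The sketch below wraps the rows with the target URL and a UTC timestamp; make_snapshot, the field names (scraped_at, target_url, count, hotels), and the sample row are illustrative assumptions, not a fixed schema.

```python
import json
from datetime import datetime, timezone


def make_snapshot(target_url: str, hotels: list) -> dict:
    """Wrap parsed rows with when and where they were observed."""
    return {
        "scraped_at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "target_url": target_url,
        "count": len(hotels),
        "hotels": hotels,
    }


# Invented sample row, shaped like the parser output in this guide.
sample_hotels = [{"name": "Example Hotel", "price_text": "£ 310", "url": None}]
snapshot = make_snapshot(
    "https://www.booking.com/searchresults.html?ss=London", sample_hotels
)
print(json.dumps(snapshot, ensure_ascii=False, indent=2))
```

Writing one snapshot per run (for example with the timestamp in the filename) makes it possible to compare prices across runs later.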

Common failure modes (and how to debug fast)

1) You get a tiny HTML page

That’s usually a consent page, block page, or error response.

Fixes:

- verify you’re using realistic headers (User-Agent, Accept-Language)

- add backoff between runs

- spread requests out over time (don’t send 200 requests in 10 seconds)

- use ProxiesAPI for more reliable fetching across runs
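The size check in fetch_html can be paired with a content check, since a block or consent page is not always small. A minimal sketch, assuming the property-card marker used in this guide and the same 20,000-character threshold; looks_blocked is a hypothetical helper and the sample strings are synthetic.

```python
CARD_MARKER = 'data-testid="property-card"'
MIN_CHARS = 20_000


def looks_blocked(html: str, min_chars: int = MIN_CHARS) -> bool:
    """Heuristic: True when the response is unlikely to be a results page."""
    if len(html) < min_chars:
        return True
    return CARD_MARKER not in html


# Synthetic inputs, only to exercise the three branches.
tiny = "<html><body>Please enable cookies</body></html>"
big_without_cards = "<html>" + "x" * 30_000 + "</html>"
big_with_cards = "<html><div " + CARD_MARKER + ">" + "x" * 30_000 + "</div></html>"

print(looks_blocked(tiny), looks_blocked(big_without_cards), looks_blocked(big_with_cards))
# True True False
```

A genuine search with zero results, or a markup change, would also trip this check, so treat a True result as a prompt to save and inspect the HTML rather than as proof of a block.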

2) Selectors return 0 cards

Do not rewrite everything. Instead:

- save the HTML to disk

- search for a known hotel name from the page

- update one selector at a time

with open("debug.html", "w", encoding="utf-8") as f:
    f.write(html)

print("saved debug.html")

Then open debug.html in your browser, inspect a card, and adjust selectors.

Where ProxiesAPI fits (honestly)

Booking.com is a site where reliability matters. You might be scraping multiple cities, dates, and occupancies.

ProxiesAPI helps you keep the “fetch layer” simple:

- you call one URL

- you keep your parsing code unchanged

- you can add retries/backoff without managing proxy pools
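Scraping several cities and stay dates usually means generating one search URL per combination and fetching them at a polite pace. The sketch below only builds the URL list; iter_search_urls, the cities, and the dates are illustrative assumptions, and each URL would then go through fetch_html with a pause between requests.

```python
from datetime import date, timedelta
from itertools import product
from urllib.parse import urlencode

SEARCH_BASE = "https://www.booking.com/searchresults.html"


def iter_search_urls(cities, checkins, nights=2, adults=2):
    """Yield one search URL per (city, check-in date) combination."""
    for city, checkin in product(cities, checkins):
        checkout = checkin + timedelta(days=nights)
        params = {
            "ss": city,
            "checkin": checkin.isoformat(),
            "checkout": checkout.isoformat(),
            "group_adults": adults,
            "no_rooms": 1,
            "group_children": 0,
        }
        yield SEARCH_BASE + "?" + urlencode(params)


urls = list(iter_search_urls(["London", "Paris"],
                             [date(2026, 4, 10), date(2026, 4, 17)]))
print(len(urls))  # 4
for u in urls:
    print(u)
```

Keeping URL generation separate from fetching makes it easy to log, deduplicate, or resume a batch without touching the parsing code.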

QA checklist

- cards > 0 on your target query

- Spot-check 5 hotels: do name and price match what you see on the page?

- URLs resolve to real hotel pages

- Your script uses timeouts and retries

- You export clean JSON
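Most of this checklist can be automated as a quick pass over the parsed rows; the spot-check against the live page still has to be done by eye. A minimal sketch, assuming rows shaped like the output of parse_cards; qa_report is a hypothetical helper, the pass criteria are one possible choice, and the sample rows are invented.

```python
HOTEL_PREFIX = "https://www.booking.com/hotel/"


def qa_report(hotels: list) -> dict:
    """Summarize how complete the parsed rows are."""
    total = len(hotels)
    with_name = sum(1 for h in hotels if h.get("name"))
    with_price = sum(1 for h in hotels if h.get("price_text"))
    hotel_urls = sum(
        1 for h in hotels if (h.get("url") or "").startswith(HOTEL_PREFIX)
    )
    return {
        "total": total,
        "with_name": with_name,
        "with_price": with_price,
        "hotel_urls": hotel_urls,
        # Assumed pass rule: at least one row, and every row has a name and a hotel URL.
        "ok": total > 0 and with_name == total and hotel_urls == total,
    }


# Invented rows: the second one is missing its price and has no hotel URL.
rows = [
    {"name": "Example Hotel", "price_text": "£ 310",
     "url": "https://www.booking.com/hotel/gb/example.html"},
    {"name": "Another Hotel", "price_text": None, "url": None},
]
report = qa_report(rows)
print(report)
```

Missing prices are counted but do not fail the run here, since a card may legitimately have no price to show (an assumption of this sketch).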
