How to Scrape Trustpilot Reviews for Any Company

Trustpilot is one of the fastest ways to understand how customers actually feel about a company. If you can collect review text, star ratings, and dates into a CSV, you can do useful things immediately:

- monitor reputation shifts over time

- compare competitors side by side

- spot recurring complaints before they become obvious in support tickets

- feed clean review text into downstream NLP workflows

In this guide, we’ll build a real Python scraper for a Trustpilot company page, extract reviewer name, rating, date, and review text, then export everything to CSV.

We’ll use the TripAdvisor Trustpilot page as the example target:

https://www.trustpilot.com/review/www.tripadvisor.com

If you need to collect review pages across many companies, ProxiesAPI gives you one stable fetch layer so your scraper code stays simple while request volume grows.

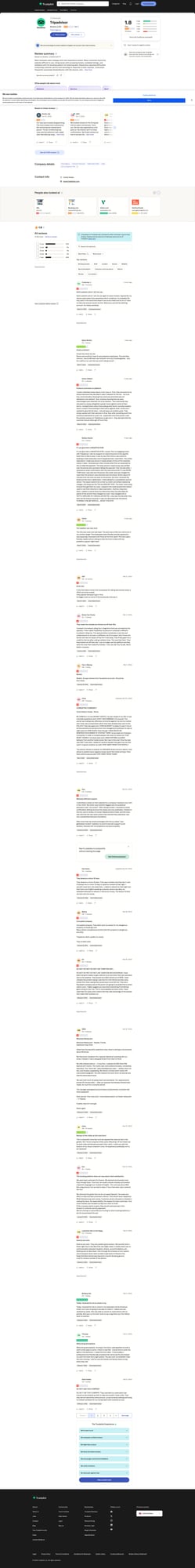

What the Trustpilot page looks like

At the time of writing, the review cards are included in the server-rendered HTML, so a straightforward requests + BeautifulSoup workflow is enough and no headless browser is needed.

Useful patterns from the HTML:

- each visible review card is an article[data-service-review-card-paper="true"]

- reviewer name appears inside [data-consumer-name-typography]

- review date appears in time[data-service-review-date-time-ago="true"]

- rating is stored in the alt text of the stars image, for example: Rated 2 out of 5 stars

- review text appears in either [data-service-review-text-typography] or [data-relevant-review-text-typography]

That means we can scrape it without guessing random CSS class hashes.

Setup

Create a virtual environment and install the parser libraries:

```shell
python -m venv .venv
source .venv/bin/activate
pip install requests beautifulsoup4 lxml
```

We’ll keep the scraper dependency-light:

- requests for fetching

- BeautifulSoup for parsing

- csv from the Python standard library for export

Step 1: Fetch the page

Start with a clean fetch helper that uses headers and timeouts.

```python
import requests

# A browser-like User-Agent; the default requests one is often rejected.
HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/123.0.0.0 Safari/537.36"
    )
}

# (connect timeout, read timeout) in seconds
TIMEOUT = (10, 30)


def fetch_html(url: str) -> str:
    response = requests.get(url, headers=HEADERS, timeout=TIMEOUT)
    response.raise_for_status()  # fail loudly on 4xx/5xx responses
    return response.text


url = "https://www.trustpilot.com/review/www.tripadvisor.com"
html = fetch_html(url)
print("downloaded characters:", len(html))
print(html[:180])
```

Example terminal output

downloaded characters: 831246

<!DOCTYPE html><html lang="en-US"><head><meta charSet="UTF-8"/><meta name="viewport" content="width=device-width, initial-scale=1"/>

Step 2: Parse one review card correctly

Here is a simplified version of the structure we care about:

```html
<article data-service-review-card-paper="true">
  <span data-consumer-name-typography="true">Gaclly Jaly</span>
  <time data-service-review-date-time-ago="true" datetime="2025-10-14T00:40:27.000Z">Oct 14, 2025</time>
  <img alt="Rated 2 out of 5 stars" class="CDS_StarRating_starRating__..." />
  <p data-relevant-review-text-typography="true">Our stay was honestly disappointing...</p>
</article>
```

The most important detail is the rating. Trustpilot exposes it in the image alt text, so we can parse the number out safely.

Step 3: Build the parser

```python
import re

from bs4 import BeautifulSoup


def parse_rating(text: str) -> int | None:
    # Pull the star count out of alt text such as "Rated 2 out of 5 stars".
    match = re.search(r"Rated\s+(\d+)\s+out of\s+5", text or "")
    return int(match.group(1)) if match else None


def parse_reviews(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "lxml")
    rows = []

    for card in soup.select('article[data-service-review-card-paper="true"]'):
        name_el = card.select_one('[data-consumer-name-typography="true"]')
        date_el = card.select_one('time[data-service-review-date-time-ago="true"]')
        rating_el = card.select_one('img[alt*="Rated"]')
        # The review body can appear under either attribute.
        text_el = (
            card.select_one('[data-service-review-text-typography="true"]')
            or card.select_one('[data-relevant-review-text-typography="true"]')
        )
        title_el = card.select_one('[data-service-review-title-typography="true"]')

        review = {
            "reviewer_name": name_el.get_text(strip=True) if name_el else None,
            "review_date": date_el.get_text(" ", strip=True) if date_el else None,
            "review_datetime": date_el.get("datetime") if date_el else None,
            "rating": parse_rating(rating_el.get("alt", "") if rating_el else ""),
            "review_title": title_el.get_text(" ", strip=True) if title_el else None,
            "review_text": text_el.get_text(" ", strip=True) if text_el else None,
        }

        # Skip cards that are missing a name or a body.
        if review["reviewer_name"] and review["review_text"]:
            rows.append(review)

    return rows


reviews = parse_reviews(html)
print("reviews found:", len(reviews))
print(reviews[0])
```

Example output

reviews found: 20

{'reviewer_name': 'Gaclly Jaly', 'review_date': 'Oct 14, 2025', 'review_datetime': '2025-10-14T00:40:27.000Z', 'rating': 2, 'review_title': None, 'review_text': 'Our stay was honestly disappointing. The room looked nice in photos but felt old and poorly maintained in person. The air conditioning was noisy and the bathroom had a slight odor that didnt go away.... See more'}

If you only want the main text and not the “See more” suffix, you can clean it after extraction.

Step 4: Handle pagination

Trustpilot company reviews can span multiple pages. The simplest production pattern is:

- fetch page 1

- parse the reviews

- follow the “next page” link if present

- stop after a limit you control

Here’s a practical crawler:

```python
from urllib.parse import urljoin

BASE_URL = "https://www.trustpilot.com"


def find_next_page(html: str, current_url: str) -> str | None:
    soup = BeautifulSoup(html, "lxml")

    next_link = soup.select_one('a[name="pagination-button-next"]')
    if next_link and next_link.get("href"):
        return urljoin(BASE_URL, next_link["href"])

    # fallback: explicit rel if Trustpilot changes the markup
    next_link = soup.select_one('a[rel="next"]')
    if next_link and next_link.get("href"):
        return urljoin(BASE_URL, next_link["href"])

    return None


def crawl_reviews(start_url: str, max_pages: int = 3) -> list[dict]:
    all_reviews = []
    seen = set()  # (name, timestamp, text) keys already collected
    url = start_url

    for page_num in range(1, max_pages + 1):
        html = fetch_html(url)
        batch = parse_reviews(html)

        for row in batch:
            key = (row["reviewer_name"], row["review_datetime"], row["review_text"])
            if key in seen:
                continue
            seen.add(key)
            all_reviews.append(row)

        print(f"page {page_num}: {len(batch)} reviews | total unique: {len(all_reviews)}")

        next_url = find_next_page(html, url)
        if not next_url:
            break
        url = next_url

    return all_reviews


reviews = crawl_reviews("https://www.trustpilot.com/review/www.tripadvisor.com", max_pages=3)
print("final total:", len(reviews))
```

Example terminal output

page 1: 20 reviews | total unique: 20

page 2: 20 reviews | total unique: 40

page 3: 20 reviews | total unique: 60

final total: 60

Step 5: Export to CSV

Now let’s write the data to a file you can open in Excel, Numbers, or pandas.

```python
import csv


def save_csv(rows: list[dict], path: str) -> None:
    if not rows:
        return  # nothing to write, so no file is created

    # newline="" lets the csv module control line endings itself.
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=rows[0].keys())
        writer.writeheader()
        writer.writerows(rows)


save_csv(reviews, "trustpilot_reviews_tripadvisor.csv")
print("saved", len(reviews), "rows to trustpilot_reviews_tripadvisor.csv")
```

Output

saved 60 rows to trustpilot_reviews_tripadvisor.csv

A sample CSV row looks like this:

reviewer_name,review_date,review_datetime,rating,review_title,review_text

Gaclly Jaly,"Oct 14, 2025",2025-10-14T00:40:27.000Z,2,,"Our stay was honestly disappointing. The room looked nice in photos but felt old and poorly maintained in person..."
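To sanity-check the export, one option is to read the file back and tally the ratings. The sketch below uses only the standard library; the helper name `rating_counts` is hypothetical, and it assumes the column layout shown in the sample row above.

```python
import csv
from collections import Counter


def rating_counts(path: str) -> Counter:
    """Tally how many exported reviews carry each star rating."""
    counts = Counter()
    with open(path, newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f):
            # An empty cell means the rating could not be parsed; skip it.
            if row["rating"]:
                counts[int(row["rating"])] += 1
    return counts
```

Calling `rating_counts("trustpilot_reviews_tripadvisor.csv")` after the export gives a quick view of the star distribution and makes rows with missing ratings easy to notice.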

Full working script

This version is ready to run as-is.

```python
import csv
import re
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/123.0.0.0 Safari/537.36"
    )
}

TIMEOUT = (10, 30)
BASE_URL = "https://www.trustpilot.com"


def fetch_html(url: str) -> str:
    response = requests.get(url, headers=HEADERS, timeout=TIMEOUT)
    response.raise_for_status()
    return response.text


def parse_rating(text: str) -> int | None:
    match = re.search(r"Rated\s+(\d+)\s+out of\s+5", text or "")
    return int(match.group(1)) if match else None


def parse_reviews(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "lxml")
    rows = []

    for card in soup.select('article[data-service-review-card-paper="true"]'):
        name_el = card.select_one('[data-consumer-name-typography="true"]')
        date_el = card.select_one('time[data-service-review-date-time-ago="true"]')
        rating_el = card.select_one('img[alt*="Rated"]')
        text_el = (
            card.select_one('[data-service-review-text-typography="true"]')
            or card.select_one('[data-relevant-review-text-typography="true"]')
        )
        title_el = card.select_one('[data-service-review-title-typography="true"]')

        row = {
            "reviewer_name": name_el.get_text(strip=True) if name_el else None,
            "review_date": date_el.get_text(" ", strip=True) if date_el else None,
            "review_datetime": date_el.get("datetime") if date_el else None,
            "rating": parse_rating(rating_el.get("alt", "") if rating_el else ""),
            "review_title": title_el.get_text(" ", strip=True) if title_el else None,
            "review_text": text_el.get_text(" ", strip=True) if text_el else None,
        }

        if row["reviewer_name"] and row["review_text"]:
            rows.append(row)

    return rows


def find_next_page(html: str) -> str | None:
    soup = BeautifulSoup(html, "lxml")
    next_link = soup.select_one('a[name="pagination-button-next"]') or soup.select_one('a[rel="next"]')
    if next_link and next_link.get("href"):
        return urljoin(BASE_URL, next_link["href"])
    return None


def crawl_reviews(start_url: str, max_pages: int = 3) -> list[dict]:
    all_rows = []
    seen = set()
    url = start_url

    for _ in range(max_pages):
        html = fetch_html(url)
        batch = parse_reviews(html)

        for row in batch:
            key = (row["reviewer_name"], row["review_datetime"], row["review_text"])
            if key in seen:
                continue
            seen.add(key)
            all_rows.append(row)

        next_url = find_next_page(html)
        if not next_url:
            break
        url = next_url

    return all_rows


def save_csv(rows: list[dict], path: str) -> None:
    if not rows:
        return

    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=rows[0].keys())
        writer.writeheader()
        writer.writerows(rows)


if __name__ == "__main__":
    start_url = "https://www.trustpilot.com/review/www.tripadvisor.com"
    rows = crawl_reviews(start_url, max_pages=3)
    save_csv(rows, "trustpilot_reviews_tripadvisor.csv")
    print(f"saved {len(rows)} reviews")
```

Where ProxiesAPI fits

If you’re only scraping one company page occasionally, direct requests may be enough.

But if you want to scrape hundreds or thousands of company profiles on a schedule, it helps to move the networking layer behind a single API endpoint.

With ProxiesAPI, the request shape is:

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://www.trustpilot.com/review/www.tripadvisor.com"

And in Python:

```python
import requests
from urllib.parse import quote_plus


def fetch_via_proxiesapi(target_url: str, api_key: str) -> str:
    # The target URL is percent-encoded so it survives as a query parameter.
    proxy_url = (
        "http://api.proxiesapi.com/?key="
        f"{api_key}&url={quote_plus(target_url)}"
    )
    response = requests.get(proxy_url, timeout=(10, 30))
    response.raise_for_status()
    return response.text
```

The rest of your parser stays the same. That’s the important design principle: keep parsing separate from fetching.
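One way to make that separation explicit is to pass the fetcher in as a parameter instead of calling it directly. The following is a minimal sketch, not part of the script above: `crawl_pages` and the `Fetcher` and `NextPage` aliases are hypothetical names, and the loop only collects raw HTML so that parsing can happen afterwards.

```python
from typing import Callable, Optional

# Any function that turns a URL into HTML can act as the fetch layer.
Fetcher = Callable[[str], str]
# Any function that finds the next page URL in the HTML, or returns None.
NextPage = Callable[[str], Optional[str]]


def crawl_pages(
    start_url: str,
    fetch: Fetcher,
    next_page: NextPage,
    max_pages: int = 3,
) -> list[str]:
    """Collect raw HTML for up to max_pages pages using the injected fetcher."""
    pages = []
    url: Optional[str] = start_url
    for _ in range(max_pages):
        if url is None:
            break
        html = fetch(url)
        pages.append(html)
        url = next_page(html)
    return pages
```

With the one-argument `find_next_page` from the full script, switching between direct requests and ProxiesAPI would then be a one-line change: pass `fetch=fetch_html` in one case and `fetch=lambda u: fetch_via_proxiesapi(u, api_key)` in the other.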

Practical cleanup tips

Before you feed Trustpilot data into dashboards or analysis, it’s worth doing three cleanups:

- Trim trailing UI text like “See more”

- Normalize dates into ISO format using the datetime attribute

- Deduplicate by name + timestamp + text so pagination restarts don’t duplicate rows

Example cleanup:

```python
def clean_review_text(text: str | None) -> str | None:
    if not text:
        return text
    # str.removesuffix requires Python 3.9 or newer.
    return text.removesuffix(" See more").strip()
```
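The date cleanup can be sketched in the same spirit. The helper below is an illustration with a hypothetical name, `normalize_datetime`. It assumes the datetime attribute looks like the sample value shown earlier (2025-10-14T00:40:27.000Z) and treats a value without a timezone as UTC, which is an assumption rather than something the page guarantees.

```python
from datetime import datetime, timezone
from typing import Optional


def normalize_datetime(value: Optional[str]) -> Optional[str]:
    """Return the timestamp as ISO 8601 in UTC, or None if it cannot be parsed."""
    if not value:
        return None
    try:
        # Older Python versions do not accept a trailing "Z", so swap in an offset.
        parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    except ValueError:
        return None
    if parsed.tzinfo is None:
        # Assumption: a timestamp without a timezone is already in UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc).isoformat(timespec="seconds")
```

Applied to the sample review, `normalize_datetime("2025-10-14T00:40:27.000Z")` returns `"2025-10-14T00:40:27+00:00"`, while display strings such as "Oct 14, 2025" return `None` instead of raising.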

Final checklist

Before you run this in production, verify:

- the selectors still match the live HTML

- your scraper respects robots.txt, rate limits, and site terms

- retries are added around transient failures

- you store raw HTML samples for debugging selector breakage

- CSV exports are UTF-8 encoded
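For the retry item, a small wrapper with exponential backoff is usually enough to start with. This is a sketch under stated assumptions: `fetch_with_retries` is a hypothetical helper, it wraps any fetch function such as `fetch_html`, and it catches every exception for brevity, where real code would likely narrow that to `requests.RequestException`.

```python
import time
from typing import Callable


def fetch_with_retries(
    fetch: Callable[[str], str],
    url: str,
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call fetch(url), retrying failures with exponentially growing delays."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fetch(url)
        except Exception:
            # Out of attempts: let the last error propagate to the caller.
            if attempt == attempts:
                raise
            # Wait base_delay, then 2x, then 4x, ... before the next try.
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")
```

The `sleep` parameter exists so the delay can be replaced in tests; in normal use, `fetch_with_retries(fetch_html, url)` would be enough.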

If you do that, you’ll have a scraper that is small, understandable, and easy to repair when the page changes.

