Scrape Reddit Forum Data with Python: Posts, Comments, and Pagination

Reddit is one of the best “real-world” scraping playgrounds because you’ll hit the exact problems you see everywhere:

- pagination

- inconsistent markup (especially on modern reddit)

- rate limits / 429s

- comment pages that can be huge

The key trick is this:

Don’t scrape the modern JS app HTML. Scrape old.reddit.com (server-rendered, stable).

In this guide we’ll build a scraper that:

- Crawls a subreddit listing (top/new) across multiple pages.

- Extracts post metadata + the post URL.

- Fetches comment threads and parses comments.

- Exports JSONL so you can stream results safely.

Reddit can be rate-limited and geo/IP sensitive at scale. ProxiesAPI helps keep your crawling stable by rotating IPs and smoothing out bursts when you fetch many listing + comment pages.

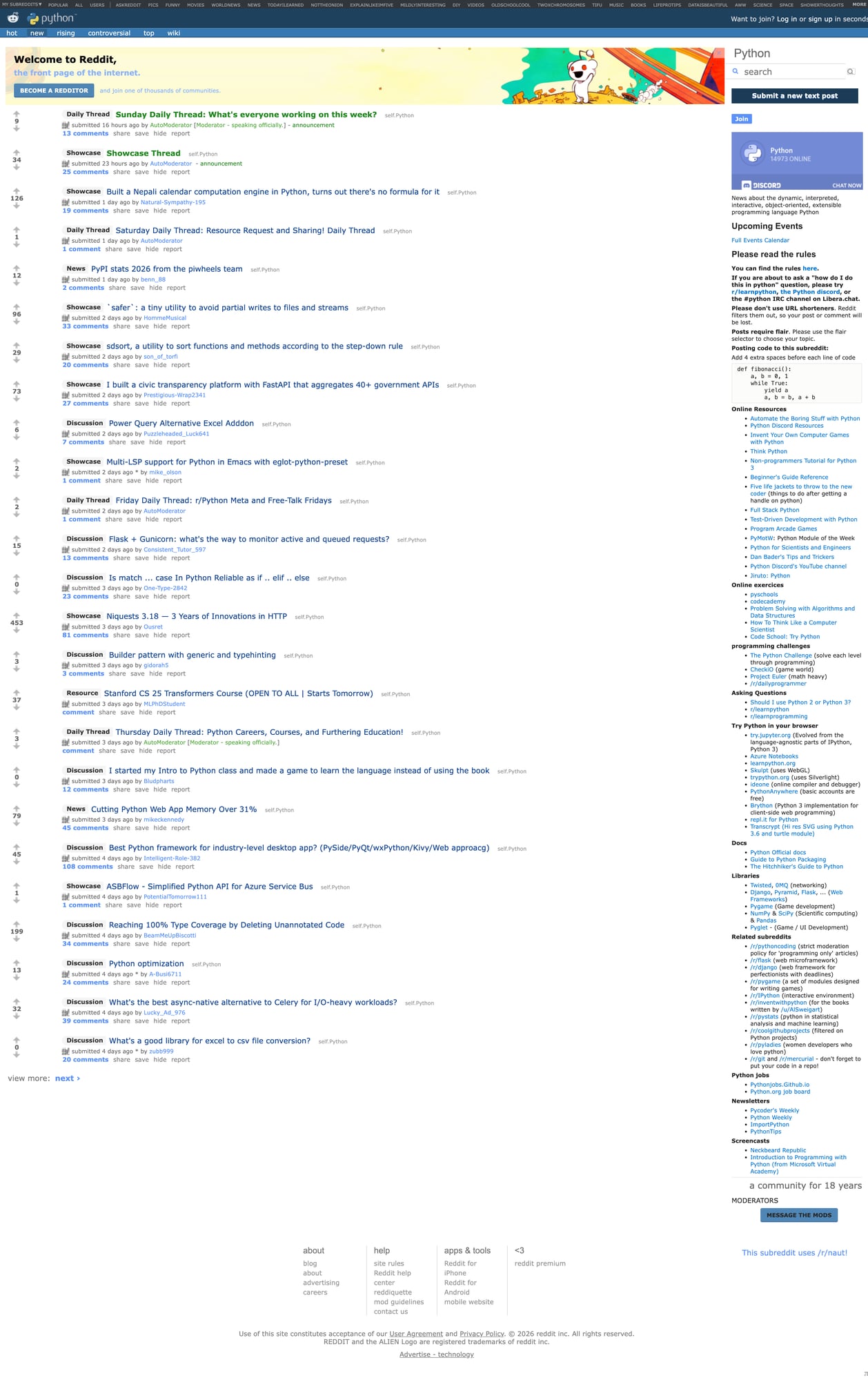

What we’re scraping

Subreddit listing pages

Example targets:

- https://old.reddit.com/r/Python/ (hot)

- https://old.reddit.com/r/Python/new/

- https://old.reddit.com/r/Python/top/?t=week

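Pagination uses an after token passed in the ?after= query parameter. The token is the fullname of the last post on the current page (for example, t3_xxxxx).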
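As a rough illustration of how the token travels between pages, the sketch below builds a listing URL and pulls the token back out of a "next" link using only the standard library. The helper names listing_url and extract_after are made up for this example, and t3_abc123 is a placeholder token.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def listing_url(subreddit, sort="new", after=None, t=None):
    """Build an old.reddit.com listing URL (hypothetical helper)."""
    base = f"https://old.reddit.com/r/{subreddit}/{sort}/"
    params = {}
    if t:
        params["t"] = t  # time window, e.g. "week" for top listings
    if after:
        params["after"] = after  # token taken from the previous page
    return base + ("?" + urlencode(params) if params else "")


def extract_after(href):
    """Return the after token from a next-page link, or None if absent."""
    values = parse_qs(urlsplit(href).query).get("after")
    return values[0] if values else None


print(listing_url("Python", "top", after="t3_abc123", t="week"))
print(extract_after("https://old.reddit.com/r/Python/new/?count=25&after=t3_abc123"))
```

Parsing the query string rather than pattern-matching it means URL-encoded tokens are decoded for you.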

Comment pages

Each post has a permalink like:

https://old.reddit.com/r/Python/comments/POST_ID/some_title/

Comments are in the server-rendered HTML too.

Setup

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml

Step 1: A robust fetch() with retries + polite delays

When scraping Reddit, you want:

- a real User-Agent

- timeouts

- retry/backoff for 429/5xx

import random
import time
from typing import Optional

import requests

TIMEOUT = (10, 30)  # (connect, read) timeouts in seconds
RETRY_STATUSES = (429, 500, 502, 503)

session = requests.Session()
session.headers.update({
    "User-Agent": (
        "Mozilla/5.0 (compatible; ProxiesAPI-GuidesBot/1.0; +https://proxiesapi.com)"
    )
})


def fetch(url: str, *, max_retries: int = 5) -> str:
    last_exc: Optional[Exception] = None

    for attempt in range(1, max_retries + 1):
        try:
            r = session.get(url, timeout=TIMEOUT)
        except requests.RequestException as e:
            # Network-level failure (DNS, connect, timeout): back off and retry
            last_exc = e
            sleep = min(30, 2 ** attempt) + random.random()
            print(f"error {type(e).__name__}, backoff {sleep:.1f}s")
            time.sleep(sleep)
            continue

        # Basic rate-limit handling: retry 429 and transient 5xx responses
        if r.status_code in RETRY_STATUSES:
            sleep = min(30, 2 ** attempt) + random.random()
            print(f"HTTP {r.status_code}, backoff {sleep:.1f}s (attempt {attempt}/{max_retries})")
            time.sleep(sleep)
            continue

        # Other errors (e.g. 404) will not succeed on retry, so fail immediately
        r.raise_for_status()

        # Small jitter to avoid hammering
        time.sleep(0.8 + random.random())
        return r.text

    raise RuntimeError(f"failed to fetch {url}") from last_exc
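To make the backoff concrete, this sketch computes the wait for each attempt with the same min(30, 2 ** attempt) formula that fetch() uses (jitter left out) and, as an optional extension, prefers a numeric Retry-After header when the server sends one. Whether Reddit includes that header on a given response is an assumption, and retry_delay is a hypothetical helper, not part of requests.

```python
from typing import Optional


def retry_delay(attempt: int, retry_after: Optional[str] = None, cap: float = 30.0) -> float:
    """Seconds to wait before the next retry (jitter omitted for clarity)."""
    if retry_after is not None:
        try:
            # Retry-After may carry a number of seconds; honor it up to the cap
            return min(cap, max(0.0, float(retry_after)))
        except ValueError:
            pass  # HTTP-date form or junk: fall through to exponential backoff
    return min(cap, float(2 ** attempt))


schedule = [retry_delay(a) for a in range(1, 6)]
print(schedule)  # [2.0, 4.0, 8.0, 16.0, 30.0]
```

Inside fetch(), the call could look like retry_delay(attempt, r.headers.get("Retry-After")) plus the usual random jitter.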

Step 2: Parse posts from a subreddit listing

On old Reddit, posts are usually div.thing elements.

import re

from bs4 import BeautifulSoup


def clean_text(s: str | None) -> str | None:
    if not s:
        return None
    return re.sub(r"\s+", " ", s).strip() or None


def parse_listing(html: str) -> tuple[list[dict], str | None]:
    """Returns (posts, next_after_token)."""
    soup = BeautifulSoup(html, "lxml")
    posts = []

    for thing in soup.select("div.thing"):
        post_id = thing.get("data-fullname")  # e.g. t3_xxxxx
        permalink = thing.get("data-permalink")
        url = thing.get("data-url")

        title_el = thing.select_one("a.title")
        title = clean_text(title_el.get_text(" ", strip=True) if title_el else None)

        author_el = thing.select_one("a.author")
        author = clean_text(author_el.get_text(strip=True) if author_el else None)

        # Prefer the title attribute, then fall back to the visible text
        score_el = thing.select_one("div.score")
        score = clean_text(score_el.get("title") if score_el else None) or clean_text(
            score_el.get_text(strip=True) if score_el else None
        )

        comments_el = thing.select_one("a.comments")
        comments_text = clean_text(comments_el.get_text(" ", strip=True) if comments_el else None)

        posts.append({
            "id": post_id,
            "title": title,
            "author": author,
            "url": url,
            "permalink": f"https://old.reddit.com{permalink}" if permalink else None,
            "score": score,
            "comments_text": comments_text,
        })

    # Pagination: "next" button often has ?after=TOKEN
    next_a = soup.select_one("span.next-button > a")
    after = None
    if next_a:
        href = next_a.get("href", "")
        m = re.search(r"[?&]after=([^&]+)", href)
        after = m.group(1) if m else None

    return posts, after
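parse_listing() keeps score and comments_text as strings. If you want numbers for sorting or filtering, a small normalizer along these lines may help. It assumes counts look like "1234", "1,234", or "12 comments", and it deliberately returns None for anything else (placeholders, abbreviated values such as "1.2k") rather than guessing. The name to_int is illustrative.

```python
import re
from typing import Optional


def to_int(text: Optional[str]) -> Optional[int]:
    """Parse a leading integer such as '1,234' or '12 comments', else None."""
    if not text:
        return None
    # Leading integer (commas allowed) followed by whitespace or end of string
    m = re.match(r"\s*(-?\d[\d,]*)(?=\s|$)", text)
    if not m:
        return None
    return int(m.group(1).replace(",", ""))


print(to_int("12 comments"), to_int("1,234"), to_int("1.2k"))
```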

Step 3: Crawl N pages with dedupe

from urllib.parse import urlencode


def crawl_subreddit(subreddit: str, sort: str = "new", pages: int = 3) -> list[dict]:
    base = f"https://old.reddit.com/r/{subreddit}/{sort}/"
    out: list[dict] = []
    seen = set()
    after = None

    for p in range(1, pages + 1):
        url = base
        if after:
            url = base + "?" + urlencode({"after": after})

        html = fetch(url)
        posts, after = parse_listing(html)

        added = 0
        for post in posts:
            # Dedupe on the post ID, falling back to the permalink
            key = post.get("id") or post.get("permalink")
            if not key or key in seen:
                continue
            seen.add(key)
            out.append(post)
            added += 1

        print(f"page {p}: got {len(posts)} posts, added {added}, total {len(out)}")

        # No next-page token means we reached the end of the listing
        if not after:
            break

    return out

Step 4: Parse comments from a post page

Comments on old Reddit are in div.comment blocks. We’ll extract:

- author

- score (when available)

- depth level (nesting)

- text

def parse_comments(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "lxml")
    out = []

    for c in soup.select("div.comment"):
        # Replies are nested inside their parent's div.comment. Read fields from
        # this comment's own div.entry when present (assumed old Reddit layout),
        # so a deleted parent does not pick up a reply's author or body.
        entry = c.find("div", class_="entry", recursive=False) or c

        # deleted/removed comments can be placeholders
        author_el = entry.select_one("a.author")
        author = clean_text(author_el.get_text(strip=True) if author_el else None)

        # nesting depth: stored in a class like "depth-2"; if no such class is
        # present, fall back to counting the enclosing comment blocks
        cls = " ".join(c.get("class", []))
        m = re.search(r"depth-(\d+)", cls)
        depth = int(m.group(1)) if m else len(c.find_parents("div", class_="comment"))

        score_el = entry.select_one("span.score")
        score = clean_text(score_el.get_text(" ", strip=True) if score_el else None)

        body_el = entry.select_one("div.md")
        text = clean_text(body_el.get_text("\n", strip=True) if body_el else None)

        # Skip empty placeholders
        if not author and not text:
            continue

        out.append({
            "author": author,
            "depth": depth,
            "score": score,
            "text": text,
        })

    return out
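Because parse_comments() returns a flat list in page order, the depth field is what lets you reconstruct the reply tree. Here is one way to do that with a stack. It assumes a reply always appears after its parent and is exactly one level deeper; build_tree and the "replies" key are names invented for this sketch.

```python
def build_tree(comments):
    """Nest a flat, page-ordered comment list using each item's depth."""
    roots = []
    stack = []  # stack[d] is the most recent node seen at depth d

    for c in comments:
        node = {**c, "replies": []}
        # Clamp unexpected jumps so a malformed depth cannot index past the stack
        depth = max(0, min(c.get("depth", 0), len(stack)))
        del stack[depth:]  # close nodes at this depth or deeper
        if stack:
            stack[-1]["replies"].append(node)
        else:
            roots.append(node)
        stack.append(node)

    return roots


flat = [
    {"author": "a", "depth": 0},
    {"author": "b", "depth": 1},
    {"author": "c", "depth": 2},
    {"author": "d", "depth": 1},
    {"author": "e", "depth": 0},
]
tree = build_tree(flat)
print([n["author"] for n in tree])  # ['a', 'e']
```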

Step 5: Put it together (listings → comments)

This script crawls a subreddit, then fetches comments for the first N posts.

import json


def main():
    posts = crawl_subreddit("Python", sort="new", pages=3)

    # Limit comment fetches so you don't hammer Reddit
    max_comment_pages = 10
    targets = posts[:max_comment_pages]

    with open("reddit_posts.jsonl", "w", encoding="utf-8") as f_posts, open(
        "reddit_comments.jsonl", "w", encoding="utf-8"
    ) as f_comments:
        # Export every crawled post, not only those whose comments we fetch
        for post in posts:
            f_posts.write(json.dumps(post, ensure_ascii=False) + "\n")

        for i, post in enumerate(targets, start=1):
            url = post.get("permalink")
            if not url:
                continue

            html = fetch(url)
            comments = parse_comments(html)

            # One JSON object per line: the post reference plus its comments
            f_comments.write(
                json.dumps(
                    {"post": {"id": post.get("id"), "permalink": url}, "comments": comments},
                    ensure_ascii=False,
                )
                + "\n"
            )
            print(f"{i}/{len(targets)}: comments {len(comments)}")

    print("done")


if __name__ == "__main__":
    main()
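Since each line of the output is an independent JSON document, you can process the export one record at a time without loading the whole file. A minimal reader might look like this; iter_jsonl and count_comments are hypothetical helpers, and the record shape matches what main() writes to reddit_comments.jsonl.

```python
import json
from typing import Iterator


def iter_jsonl(path: str) -> Iterator[dict]:
    """Yield one parsed record per non-empty line of a JSONL file."""
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:
                yield json.loads(line)


def count_comments(path: str) -> int:
    """Total comments across all posts in a comments export."""
    return sum(len(rec.get("comments", [])) for rec in iter_jsonl(path))
```

For example, count_comments("reddit_comments.jsonl") walks the file once and keeps only one record in memory at a time.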

Where ProxiesAPI fits

If you scrape a single subreddit occasionally, you might be fine without proxies.

But if you:

- crawl many subreddits

- fetch lots of comment pages

- run this from servers with “noisy” IP reputations

…you’ll see more 429s and intermittent blocks.

ProxiesAPI can help by:

- rotating IPs across requests

- reducing correlated bursts from a single IP

The golden rule: still keep your crawl polite (bounded concurrency + backoff). Proxies aren’t a license to spam.
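Bounded concurrency can be as simple as a small thread pool. The sketch below caps the number of requests in flight with ThreadPoolExecutor. fetch_all is a name made up here, and it takes the fetch function as a parameter so you can pass in the fetch() from Step 1 or a stub. The default of 2 workers is an arbitrary conservative choice, not a limit documented by Reddit.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable


def fetch_all(
    urls: Iterable[str],
    fetch_fn: Callable[[str], str],
    max_workers: int = 2,
) -> Dict[str, str]:
    """Fetch URLs with at most max_workers requests in flight at once."""
    urls = list(urls)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map keeps input order and re-raises the first worker error
        bodies = list(pool.map(fetch_fn, urls))
    return dict(zip(urls, bodies))


# Usage with a stub in place of a real network call
pages = fetch_all(["u1", "u2", "u3"], lambda u: f"<html>{u}</html>")
print(len(pages))  # 3
```

If you share a single requests.Session across worker threads, it may be safer to give each worker its own session instead.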

Troubleshooting

- If you get 429s: increase delay/jitter, reduce pages, and add longer exponential backoff.

- If markup looks empty: ensure you’re using old.reddit.com.

- If you see consent/age gates: handle those pages explicitly (detect and stop).
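One way to guard against accidentally requesting the modern site is to normalize every URL before fetching it. This sketch rewrites the common Reddit hostnames to old.reddit.com and leaves everything else untouched. The host list and the name to_old_reddit are assumptions made for the example.

```python
from urllib.parse import urlsplit, urlunsplit

# Hostnames treated as "modern Reddit" for this sketch
REDDIT_HOSTS = {"reddit.com", "www.reddit.com", "new.reddit.com"}


def to_old_reddit(url: str) -> str:
    """Point a Reddit URL at old.reddit.com, keeping path and query intact."""
    parts = urlsplit(url)
    if parts.netloc.lower() in REDDIT_HOSTS:
        parts = parts._replace(netloc="old.reddit.com")
    return urlunsplit(parts)


print(to_old_reddit("https://www.reddit.com/r/Python/top/?t=week"))
```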
