Scrape Secondhand Fashion Listings from Vinted (Python + ProxiesAPI)

Vinted is one of the most popular secondhand fashion marketplaces in Europe. The listings are valuable for:

- tracking price trends for brands (Nike / Zara / Patagonia)

- monitoring inventory and sell-through

- building “deal finders” and alerts

In this tutorial we’ll scrape Vinted search results pages and extract:

- listing title

- price + currency

- product URL

- image URL

We’ll also paginate across multiple result pages. Brand, size, and item condition often appear in the card too, but their markup varies, so this guide leaves them for the detail-page enrichment step listed under “Next upgrades”.

We’ll do it with Python + requests + BeautifulSoup, and we’ll show where ProxiesAPI actually fits in: keeping requests stable once you crawl deeper than a couple of pages.

Marketplaces throttle quickly when you paginate. ProxiesAPI gives you a stable proxy layer (plus easy rotation) so your scraper keeps running when you scale from 1 page to 100.

Important note (be responsible)

Before you scrape any marketplace:

- read the site’s Terms

- keep your request rate low

- don’t scrape personal data

- use caching (don’t re-fetch the same pages)

This guide is for public listing data and educational purposes.
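To make "keep your request rate low" concrete, here is a minimal sketch of a throttle that enforces a minimum gap between requests. The `Throttle` class and its parameters are hypothetical names introduced for illustration; the clock and sleep functions are injectable only so the behaviour can be checked without real waiting.

```python
import time

class Throttle:
    """Enforce a minimum interval (in seconds) between calls to wait()."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self) -> float:
        """Sleep if the previous call was too recent; return seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
            if delay:
                self._sleep(delay)
        self._last = now + delay
        return delay

# Demo with a fake clock so nothing actually sleeps.
fake_now = [100.0]
throttle = Throttle(
    2.0,
    clock=lambda: fake_now[0],
    sleep=lambda s: fake_now.__setitem__(0, fake_now[0] + s),
)
first_delay = throttle.wait()   # first call never waits
second_delay = throttle.wait()  # called immediately again, so it waits the full interval
print(first_delay, second_delay)
```

In a real crawl you would construct `Throttle(2.0)` with the defaults and call `throttle.wait()` before each request.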

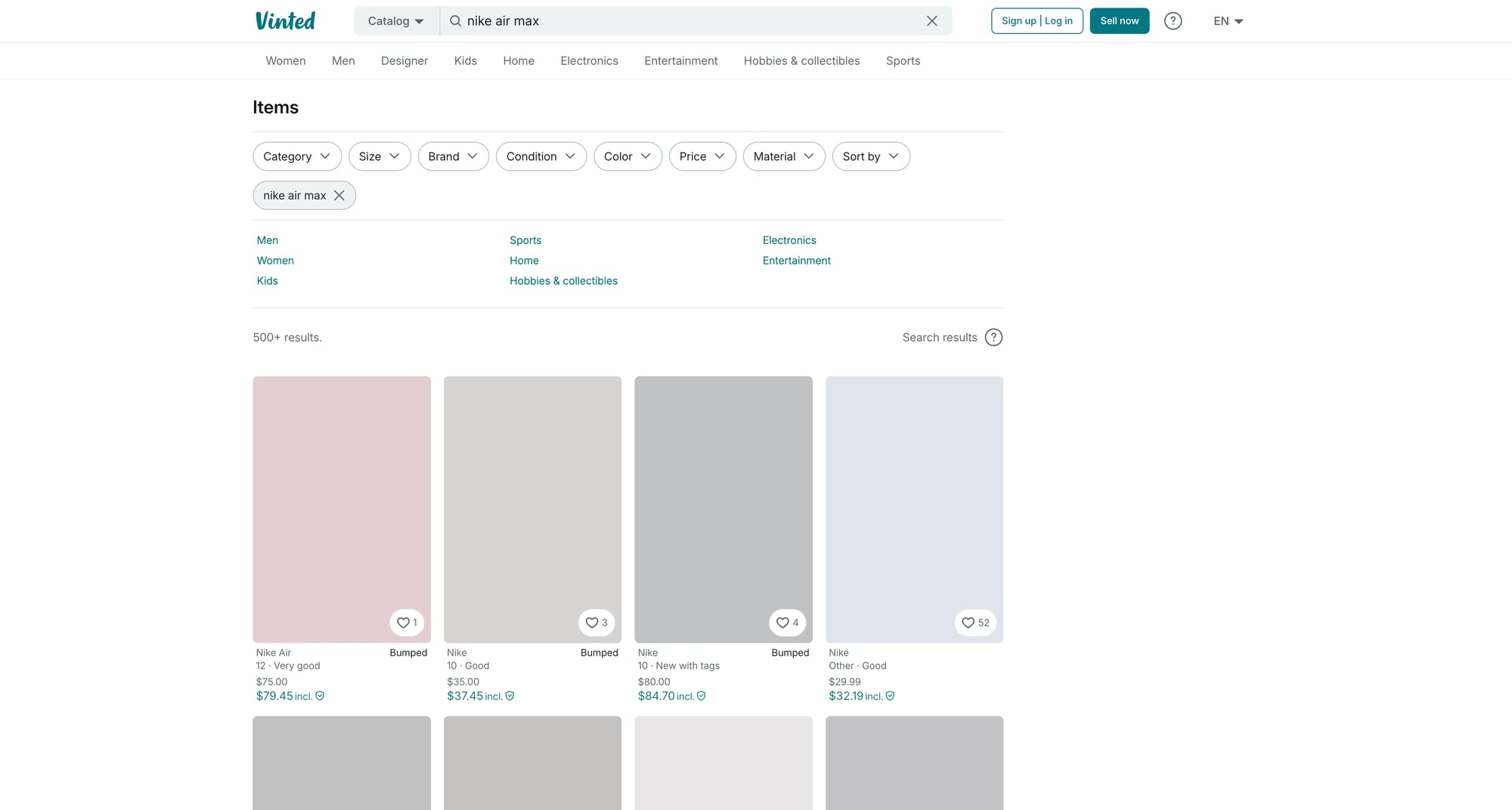

What we’re scraping (URL + structure)

Vinted search pages typically look like:

https://www.vinted.com/catalog?search_text=nike%20air%20max

You may also see country-specific domains (and localized paths). The HTML can vary by region, and parts of the site may be rendered by JS.
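Since the domain can differ by country, it can help to build search URLs from parts instead of hard-coding them. The sketch below assumes the `/catalog?search_text=...` pattern shown above holds on the domain you target; `build_search_url` is a hypothetical helper, and the `www.vinted.fr` example is only an illustration to verify in your browser.

```python
from urllib.parse import quote, urlencode

def build_search_url(query: str, domain: str = "www.vinted.com", path: str = "/catalog") -> str:
    """Build a search URL. `domain` and `path` are assumptions to confirm per region."""
    # quote_via=quote encodes spaces as %20, matching the URL shown in this guide.
    params = urlencode({"search_text": query}, quote_via=quote)
    return f"https://{domain}{path}?{params}"

print(build_search_url("nike air max"))
print(build_search_url("veste en jean", domain="www.vinted.fr"))
```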

Two practical strategies:

- Start with HTML parsing (fast, cheap) and only fall back to browser automation if the data isn’t present.

- Prefer scraping search results over item detail pages first. You can collect item URLs, then selectively enrich details later.
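One way to decide between the two approaches is to check whether the raw HTML already contains item links before reaching for a browser. This is a rough heuristic, not a guarantee: the `/items/` pattern and the threshold of 5 links are assumptions, and `has_listing_markup` is a hypothetical helper name.

```python
import re

# Assumption: item links contain "/items/" in their href, as the parser in this guide expects.
ITEM_LINK_RE = re.compile(r"""href=["'][^"']*/items/""")

def has_listing_markup(html: str, min_links: int = 5) -> bool:
    """Return True when the raw HTML already holds enough item links to parse."""
    return len(ITEM_LINK_RE.findall(html)) >= min_links

server_rendered = '<a href="/items/123-nike-air-max">Nike</a>' * 6
js_shell = "<html><body><div id='root'></div></body></html>"

print(has_listing_markup(server_rendered))  # enough links: plain HTML parsing should work
print(has_listing_markup(js_shell))         # no links: consider browser automation
```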

Setup

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml

We’ll use:

- requests for fetching

- BeautifulSoup (lxml) for parsing HTML reliably

Step 1: Fetch a search page (timeouts + headers)

Marketplaces often block “default” HTTP clients. You want:

- real timeouts (no hanging)

- a realistic User-Agent

- retry handling for 403/429/5xx

import time
import random
import requests

TIMEOUT = (10, 30)  # connect, read

DEFAULT_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/124.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
}

session = requests.Session()

def fetch(url: str) -> str:
    r = session.get(url, headers=DEFAULT_HEADERS, timeout=TIMEOUT)
    r.raise_for_status()
    return r.text

url = "https://www.vinted.com/catalog?search_text=nike%20air%20max"
html = fetch(url)
print("bytes:", len(html))
print(html[:200])

If you immediately hit 403/429, skip ahead to “Add ProxiesAPI: retries + rotation”.

Step 2: Parse listing cards with robust selectors

Vinted’s DOM changes over time. Instead of hard-coding a single fragile selector, we’ll:

- locate “card-like” anchors that link to items

- extract title/price/image from within that card

- keep parsing defensive (missing fields should not crash)

import re
from bs4 import BeautifulSoup
from urllib.parse import urljoin

BASE = "https://www.vinted.com"

def clean_text(x: str | None) -> str | None:
    if not x:
        return None
    t = re.sub(r"\s+", " ", x).strip()
    return t or None

def parse_price(text: str | None):
    """Return (amount, currency) when possible."""
    if not text:
        return None, None

    # Examples vary: "€12.00", "12,00 €", "£10", etc.
    t = text.replace("\xa0", " ").strip()

    # currency symbol first
    m = re.search(r"([€£$])\s*([0-9]+(?:[\.,][0-9]{1,2})?)", t)
    if m:
        return float(m.group(2).replace(",", ".")), m.group(1)

    # currency symbol last
    m = re.search(r"([0-9]+(?:[\.,][0-9]{1,2})?)\s*([€£$])", t)
    if m:
        return float(m.group(1).replace(",", ".")), m.group(2)

    return None, None

def parse_search_results(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "lxml")
    out = []

    # Heuristic: item links often contain "/items/".
    # We collect unique item URLs.
    seen = set()

    for a in soup.select('a[href*="/items/"]'):
        href = a.get("href")
        if not href:
            continue

        item_url = href if href.startswith("http") else urljoin(BASE, href)
        if item_url in seen:
            continue
        seen.add(item_url)

        # Title: sometimes in aria-label, sometimes in nested text.
        title = clean_text(a.get("title") or a.get("aria-label"))

        # Image: look for an <img> inside the anchor
        img = a.select_one("img")
        img_url = (img.get("src") or img.get("data-src")) if img else None

        # Price: look for obvious price-like text inside the card
        price_text = None

        # Common approach: scan for elements that include currency symbols
        for el in a.select("*"):
            t = el.get_text(" ", strip=True)
            if t and any(sym in t for sym in ["€", "£", "$"]):
                price_text = t
                break

        price_amount, price_currency = parse_price(price_text)

        out.append({
            "title": title,
            "price": price_amount,
            "currency": price_currency,
            "price_text": price_text,
            "url": item_url,
            "image": img_url,
        })

    return out

items = parse_search_results(html)
print("items:", len(items))
print(items[:2])

Why this works

- We anchor on item URLs (/items/), which are less likely to change than CSS class names.

- We keep extraction “best-effort”: a card with a missing title, price, or image yields None for that field instead of crashing.

- We store price_text for debugging so you can adjust parsing if the UI changes.
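One adjustment you may need once you inspect price_text values is handling thousands separators such as "1.299,00 €" or "€1,299.00", which the simple regexes above do not cover. The sketch below is one possible normalizer, not part of the parser above; `normalize_amount` is a hypothetical helper, and it assumes that a single separator followed by exactly three digits is a thousands separator, which is ambiguous in general.

```python
import re
from typing import Optional

def normalize_amount(raw: str) -> Optional[float]:
    """Convert '1.299,00', '1,299.00', '12,50' or '10' to a float; None if unparseable."""
    digits = re.sub(r"[^0-9.,]", "", raw)
    if not re.search(r"\d", digits):
        return None

    last_dot, last_comma = digits.rfind("."), digits.rfind(",")
    if last_dot != -1 and last_comma != -1:
        # Both separators present: the right-most one is the decimal separator.
        decimal = "." if last_dot > last_comma else ","
    elif last_dot != -1 or last_comma != -1:
        sep = "." if last_dot != -1 else ","
        tail = digits.rsplit(sep, 1)[1]
        # Assumption: one separator followed by exactly 3 digits means thousands.
        decimal = None if len(tail) == 3 else sep
    else:
        decimal = None

    if decimal is None:
        cleaned = re.sub(r"[.,]", "", digits)
    else:
        thousands = "," if decimal == "." else "."
        cleaned = digits.replace(thousands, "").replace(decimal, ".")

    try:
        return float(cleaned)
    except ValueError:
        return None

for sample in ["1.299,00 €", "€1,299.00", "12,50 €", "£10", "no price"]:
    print(repr(sample), "->", normalize_amount(sample))
```

If you adopt something like this, you would call it on the numeric part matched inside parse_price instead of the plain `replace(",", ".")`.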

Step 3: Pagination (crawl N result pages)

Vinted pagination patterns vary. A common way is adding a page parameter.

We’ll implement crawling that:

- starts from a base search URL

- increments page=1..N

- deduplicates item URLs across pages

from urllib.parse import urlencode, urlparse, parse_qs, urlunparse

def with_query(url: str, **params) -> str:
    u = urlparse(url)
    q = parse_qs(u.query)
    for k, v in params.items():
        q[k] = [str(v)]
    new_query = urlencode(q, doseq=True)
    return urlunparse((u.scheme, u.netloc, u.path, u.params, new_query, u.fragment))

def crawl_search(base_url: str, pages: int = 3, sleep_range=(0.8, 1.8)) -> list[dict]:
    all_items = []
    seen = set()

    for p in range(1, pages + 1):
        url = with_query(base_url, page=p)
        html = fetch(url)
        batch = parse_search_results(html)

        for it in batch:
            if not it.get("url") or it["url"] in seen:
                continue
            seen.add(it["url"])
            all_items.append(it)

        print(f"page {p}: {len(batch)} items (unique total: {len(all_items)})")
        time.sleep(random.uniform(*sleep_range))

    return all_items

base = "https://www.vinted.com/catalog?search_text=nike%20air%20max"
data = crawl_search(base, pages=5)
print("unique:", len(data))
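The crawl above always requests a fixed number of pages. A possible refinement is to stop as soon as a page yields no new item URLs, which covers both an empty page and a repeated last page. Whether Vinted behaves either way past the final page is not verified here. In this sketch `crawl_until_exhausted` and `fetch_page` are hypothetical names; `fetch_page` stands in for "fetch the URL for page p and parse it".

```python
from typing import Callable, Iterable

def crawl_until_exhausted(
    fetch_page: Callable[[int], Iterable[dict]],
    max_pages: int = 50,
) -> list:
    """Request pages 1, 2, ... and stop at the first page that adds no new URLs."""
    seen = set()
    collected = []
    for page in range(1, max_pages + 1):
        added = 0
        for it in fetch_page(page):
            url = it.get("url")
            if not url or url in seen:
                continue
            seen.add(url)
            collected.append(it)
            added += 1
        if added == 0:
            break  # nothing new on this page: treat it as the end of the results
    return collected

# Fake pages for demonstration only; no network access.
requested = []
def fake_fetch_page(page: int) -> list:
    requested.append(page)
    pages = {
        1: [{"url": "/items/1"}, {"url": "/items/2"}],
        2: [{"url": "/items/2"}, {"url": "/items/3"}],
        3: [{"url": "/items/3"}],  # nothing new, so the crawl stops here
        4: [{"url": "/items/4"}],  # never requested
    }
    return pages.get(page, [])

found = crawl_until_exhausted(fake_fetch_page)
print([it["url"] for it in found], requested)
```

The sleep between pages is left out to keep the sketch short; in a real crawl you would keep it.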

Step 4: Export to CSV

import csv

def export_csv(items: list[dict], path: str = "vinted_items.csv"):
    fields = ["title", "price", "currency", "price_text", "url", "image"]
    with open(path, "w", newline="", encoding="utf-8") as f:
        w = csv.DictWriter(f, fieldnames=fields)
        w.writeheader()
        for it in items:
            w.writerow({k: it.get(k) for k in fields})

export_csv(data)
print("wrote vinted_items.csv")

Add ProxiesAPI: retries + rotation (where it actually helps)

If you crawl beyond a couple pages, you’ll likely see:

- 403 Forbidden (blocked)

- 429 Too Many Requests (rate limited)

- intermittent connection resets

This is where a proxy layer helps.

The exact ProxiesAPI endpoint format depends on your account configuration, but the pattern is the same:

- your code calls a single proxy URL

- ProxiesAPI routes to the destination site using a rotating pool

- you keep your parsing logic unchanged

Here’s a clean way to structure it so you can switch between direct and proxied requests.

import os

# Reuses time, random, requests, session, DEFAULT_HEADERS and TIMEOUT from Step 1.

PROXIESAPI_PROXY_URL = os.getenv("PROXIESAPI_PROXY_URL")  # e.g. "http://user:pass@gateway.proxiesapi.com:port"

def get_proxies():
    # No proxy configured: requests goes direct.
    if not PROXIESAPI_PROXY_URL:
        return None
    return {
        "http": PROXIESAPI_PROXY_URL,
        "https": PROXIESAPI_PROXY_URL,
    }

def fetch_with_retries(url: str, tries: int = 5) -> str:
    last_err = None

    for attempt in range(1, tries + 1):
        try:
            r = session.get(
                url,
                headers=DEFAULT_HEADERS,
                timeout=TIMEOUT,
                proxies=get_proxies(),
            )

            # common retry statuses
            if r.status_code in (403, 429, 500, 502, 503, 504):
                raise requests.HTTPError(f"status {r.status_code}")

            r.raise_for_status()
            return r.text

        except requests.RequestException as e:
            last_err = e
            if attempt == tries:
                # No point sleeping after the final attempt.
                print(f"attempt {attempt}/{tries} failed: {e}; giving up")
                break
            # Exponential backoff capped at 20s, plus jitter.
            backoff = min(20, 2 ** attempt) + random.random()
            print(f"attempt {attempt}/{tries} failed: {e}; sleeping {backoff:.1f}s")
            time.sleep(backoff)

    raise RuntimeError(f"failed after {tries} tries: {last_err}")

To use it, replace fetch() inside crawl_search() with fetch_with_retries().
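Some servers attach a Retry-After header to 429 or 503 responses. Whether Vinted does is not confirmed here, but if the header is present it is usually a better guide than a fixed backoff. The helper below is a sketch with a hypothetical name, `retry_after_seconds`; it handles both forms the HTTP specification allows (a number of seconds or an HTTP date) and caps the result.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional

def retry_after_seconds(
    header_value: Optional[str],
    now: Optional[datetime] = None,
    cap: float = 60.0,
) -> Optional[float]:
    """Turn a Retry-After header into a delay in seconds, or None if absent/invalid."""
    if not header_value:
        return None
    value = header_value.strip()
    if value.isdigit():
        return min(cap, float(value))  # delta-seconds form
    try:
        when = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):
        return None
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return min(cap, max(0.0, (when - now).total_seconds()))

fixed_now = datetime(2015, 10, 21, 7, 27, 30, tzinfo=timezone.utc)
print(retry_after_seconds("5"))
print(retry_after_seconds("Wed, 21 Oct 2015 07:28:00 GMT", now=fixed_now))
print(retry_after_seconds("not-a-date"))
```

Inside fetch_with_retries you could read `r.headers.get("Retry-After")` before raising, and sleep for the returned value when it is not None instead of the computed backoff.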

Practical settings

- keep concurrency low (1–2) unless you have a strong reason

- random sleep between pages

- cache HTML responses when iterating on selectors
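For the caching point, a small on-disk cache keyed by URL is usually enough while you iterate on selectors. This is a minimal sketch: `cached_fetch` is a hypothetical wrapper, it never expires entries, and the `fetcher` argument stands in for fetch() or fetch_with_retries().

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable

def cached_fetch(url: str, fetcher: Callable[[str], str], cache_dir: Path) -> str:
    """Return cached HTML for `url` if present; otherwise fetch it and store it."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    key = hashlib.sha256(url.encode("utf-8")).hexdigest()
    path = cache_dir / f"{key}.html"
    if path.exists():
        return path.read_text(encoding="utf-8")
    html = fetcher(url)
    path.write_text(html, encoding="utf-8")
    return html

# Demo with a fake fetcher that records how often it is called.
calls = []
def fake_fetcher(url: str) -> str:
    calls.append(url)
    return f"<html>{url}</html>"

demo_dir = Path(tempfile.mkdtemp())
first = cached_fetch("https://example.com/a", fake_fetcher, demo_dir)
second = cached_fetch("https://example.com/a", fake_fetcher, demo_dir)
print(first == second, len(calls))  # the second call is served from disk
```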

QA checklist

- Collected item URLs look valid and open in browser

- Price parsing returns numbers for most cards

- Exported CSV opens cleanly in Sheets

- Pagination doesn’t duplicate the same items

- Retries work (you can simulate failures by pointing the fetcher at a stub that returns 429 or 503 a few times before succeeding)
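To check the retry item without provoking a real block, you can exercise the retry logic against a fake that replays a scripted sequence of status codes. The sketch below uses a simplified stand-in for fetch_with_retries (`fetch_text`, a hypothetical name) with the HTTP call and the sleep injected; it mirrors the same retry statuses and capped exponential backoff, minus the jitter.

```python
from types import SimpleNamespace

RETRY_STATUSES = {403, 429, 500, 502, 503, 504}

def fetch_text(get, url, tries=5, sleep=lambda seconds: None):
    """Simplified stand-in for fetch_with_retries; `get` and `sleep` are injected."""
    last_status = None
    for attempt in range(1, tries + 1):
        resp = get(url)
        if resp.status_code not in RETRY_STATUSES:
            return resp.text
        last_status = resp.status_code
        if attempt < tries:
            sleep(min(20, 2 ** attempt))  # capped exponential backoff, no jitter
    raise RuntimeError(f"failed after {tries} tries: status {last_status}")

def make_flaky_get(statuses):
    """Return a fake `get` that replays the given status codes in order."""
    remaining = list(statuses)
    def fake_get(url):
        return SimpleNamespace(status_code=remaining.pop(0), text=f"ok:{url}")
    return fake_get

delays = []
text = fetch_text(make_flaky_get([429, 503, 200]), "https://example.com", sleep=delays.append)
print(text, delays)
```

Two failures followed by a success should produce two recorded backoff delays and the final body; a sequence of only failures should end in RuntimeError.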

Next upgrades

- enrich each item URL with a detail-page parser (brand, condition, seller stats)

- store results in SQLite for incremental runs

- add a “changed since last run” diff (so you don’t re-alert on old listings)
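The SQLite and "changed since last run" ideas fit together: if the item URL is the primary key, an insert that is ignored tells you the listing was already known. The following is a sketch under that assumption; the table layout and the `upsert_items` name are illustrative, not a prescribed schema.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS items (
    url        TEXT PRIMARY KEY,
    title      TEXT,
    price      REAL,
    currency   TEXT,
    first_seen TEXT DEFAULT CURRENT_TIMESTAMP
)
"""

def upsert_items(conn: sqlite3.Connection, items: list) -> list:
    """Insert unseen items, refresh the price of known ones; return the new URLs."""
    conn.execute(SCHEMA)
    new_urls = []
    for it in items:
        cur = conn.execute(
            "INSERT OR IGNORE INTO items (url, title, price, currency) VALUES (?, ?, ?, ?)",
            (it["url"], it.get("title"), it.get("price"), it.get("currency")),
        )
        if cur.rowcount == 1:
            new_urls.append(it["url"])  # row was inserted: first time we see this URL
        else:
            conn.execute(
                "UPDATE items SET price = ?, currency = ? WHERE url = ?",
                (it.get("price"), it.get("currency"), it["url"]),
            )
    conn.commit()
    return new_urls

conn = sqlite3.connect(":memory:")  # use a file path for real incremental runs
run1 = upsert_items(conn, [{"url": "/items/1", "price": 20.0}, {"url": "/items/2", "price": 35.0}])
run2 = upsert_items(conn, [{"url": "/items/2", "price": 30.0}, {"url": "/items/3", "price": 12.0}])
print(run1, run2)
```

Only the URLs returned by the latest run would be candidates for alerts, so old listings are not re-announced.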

If you want, I can adapt the parser to a specific Vinted region + query so the selectors match what you see in your browser.
