Scrape App Store Rankings (Python + ProxiesAPI)

App Store rankings are useful for:

- market research (who’s moving up, who’s falling)

- competitor monitoring

- category discovery (new apps that start climbing)

- building “top apps” datasets

In this tutorial we’ll build a practical scraper that produces a daily CSV/JSON of top chart apps for:

- a given country (storefront)

- a given chart type (free/paid/top grossing)

- optionally multiple categories

Then we’ll enrich the dataset with stable identifiers and metadata.

When you pull charts every day across multiple countries/categories, failures add up. ProxiesAPI helps keep the fetch layer consistent so your dataset stays complete.

What we’re scraping: stable endpoints (prefer these)

There are two general ways to get App Store ranking data:

- Unofficial web HTML parsing (fragile; Apple can change it)

- Apple’s feed endpoints (more stable)

For production-ish datasets, choose the feed endpoints.

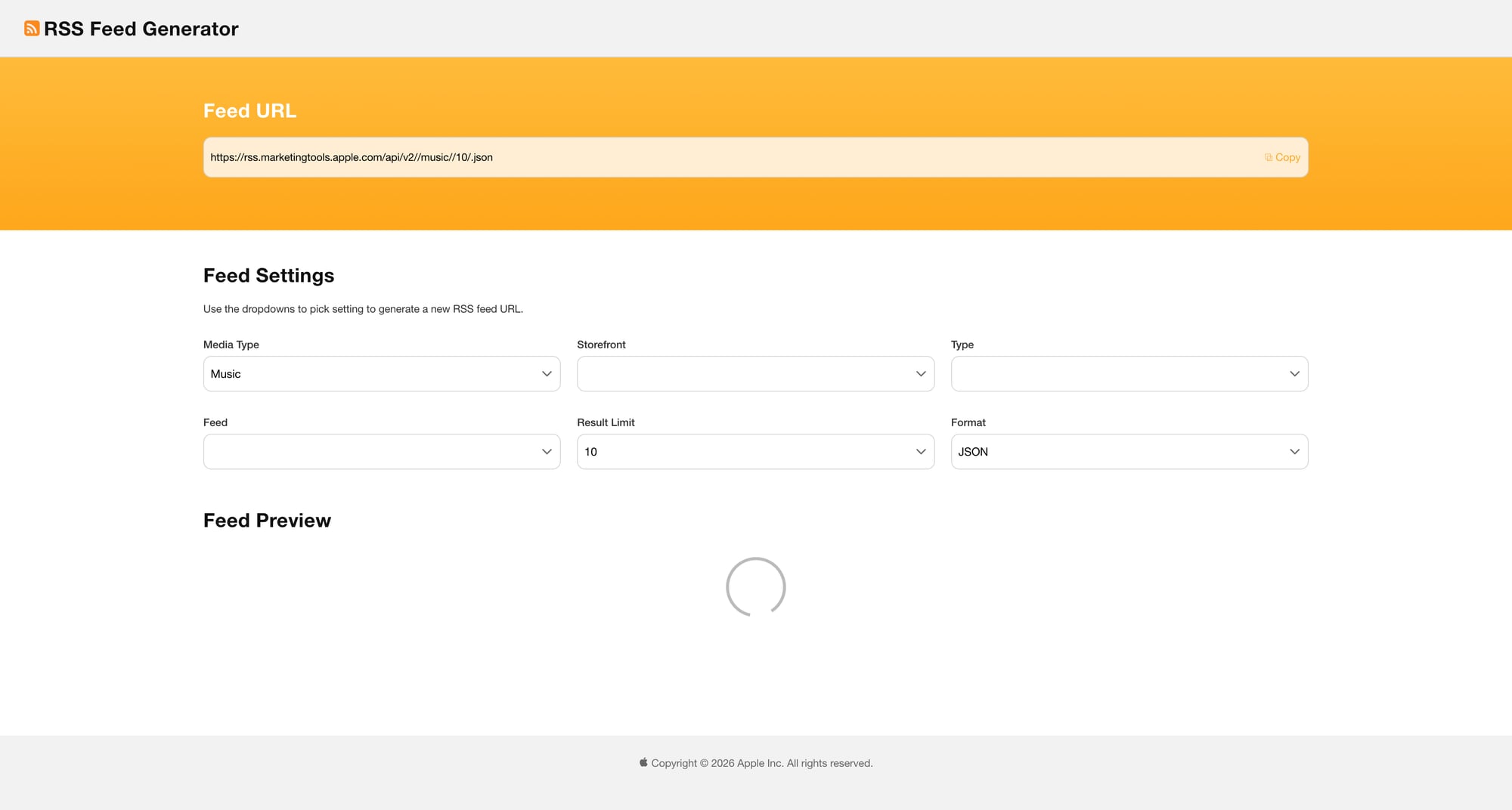

Option A: iTunes RSS / Apple Music-like feeds (JSON)

Apple historically provided RSS feeds for top apps.

Depending on the feed version you may find JSON endpoints like:

- Top Free iPhone Apps: https://itunes.apple.com/{country}/rss/topfreeapplications/limit=100/json

- Top Paid iPhone Apps: https://itunes.apple.com/{country}/rss/toppaidapplications/limit=100/json

Country is typically a two-letter code like us, gb, in.

These feeds can change over time; if an endpoint 404s, treat it as “endpoint moved” and update the base URL.
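To keep the endpoint in one place, so that a moved feed means a one-line change, the URL can be built by a small helper. This is a minimal sketch: `FEED_BASE`, `KNOWN_CHARTS`, and `build_feed_url` are hypothetical names, and only the two chart slugs shown above are included.

```python
# Base URL and chart slugs are taken from the feed URLs above.
# If the feed moves, FEED_BASE is the one value to update.
FEED_BASE = "https://itunes.apple.com"
KNOWN_CHARTS = ("topfreeapplications", "toppaidapplications")


def build_feed_url(country: str, chart: str, limit: int = 100) -> str:
    """Return the RSS JSON feed URL for one storefront and chart."""
    country = country.strip().lower()
    if len(country) != 2 or not country.isalpha():
        raise ValueError(f"expected a two-letter country code, got {country!r}")
    if chart not in KNOWN_CHARTS:
        raise ValueError(f"unknown chart slug: {chart!r}")
    if limit < 1:
        raise ValueError("limit must be positive")
    return f"{FEED_BASE}/{country}/rss/{chart}/limit={limit}/json"


print(build_feed_url("US", "topfreeapplications"))
```

Validating the inputs up front turns a typo in a country code or chart slug into an immediate error rather than a confusing 404 from the feed.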

Option B: Web pages + parsing (fallback)

Apple also publishes chart pages in the App Store web experience.

You can scrape them, but expect the DOM to change.

In this guide we’ll implement Option A first.

Setup

    python3 -m venv .venv
    source .venv/bin/activate
    pip install requests

We only need requests because the feed is JSON.

Step 1: Fetch a chart via ProxiesAPI

ProxiesAPI canonical fetch:

    curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

Python helper:

    import requests
    from urllib.parse import quote_plus

    # (connect timeout, read timeout) in seconds
    TIMEOUT = (10, 60)


    def proxiesapi_url(target_url: str, api_key: str) -> str:
        # Percent-encode the target so its own query string travels as one parameter
        return f"http://api.proxiesapi.com/?key={quote_plus(api_key)}&url={quote_plus(target_url)}"


    def fetch_json(target_url: str, api_key: str) -> dict:
        url = proxiesapi_url(target_url, api_key)
        r = requests.get(url, timeout=TIMEOUT)
        r.raise_for_status()  # surface HTTP errors instead of parsing an error page
        return r.json()
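The helper runs the target URL through `quote_plus` for a reason: a target with its own query string (such as the lookup URL used later) contains `&`, which would otherwise be read as a separator in the outer URL. The sketch below shows how a standard query-string parser splits both forms. How ProxiesAPI itself parses requests is not covered here, and the app id is a placeholder.

```python
from urllib.parse import parse_qs, quote_plus, urlsplit

# Placeholder target with its own query string (the id is made up).
target = "https://itunes.apple.com/lookup?id=123456789&country=us"

naive = f"http://api.proxiesapi.com/?key=API_KEY&url={target}"
encoded = f"http://api.proxiesapi.com/?key=API_KEY&url={quote_plus(target)}"

naive_params = parse_qs(urlsplit(naive).query)
encoded_params = parse_qs(urlsplit(encoded).query)

# Unencoded: the target is cut at its first "&" and "country" leaks
# into the outer query string as a separate parameter.
print(naive_params["url"], naive_params.get("country"))

# Encoded: the full target URL comes back as a single "url" value.
print(encoded_params["url"])
```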

Pick a chart endpoint. Example (Top Free):

    API_KEY = "API_KEY"
    COUNTRY = "us"
    FEED_URL = f"https://itunes.apple.com/{COUNTRY}/rss/topfreeapplications/limit=100/json"

    payload = fetch_json(FEED_URL, API_KEY)
    print(payload.keys())

Step 2: Parse ranks, app ids, names, developers

Typical RSS JSON structure includes a feed with entry items.

We’ll normalize into rows with:

- rank (1..N)

- app_id (stable numeric id)

- name

- developer

- app_url

- country

- chart

- pulled_at (date of the pull)

    from datetime import date


    def parse_top_feed(payload: dict, *, country: str, chart: str) -> list[dict]:
        feed = payload.get("feed") or {}
        entries = feed.get("entry") or []
        # Defensive: a feed with a single entry may return an object, not a list
        if isinstance(entries, dict):
            entries = [entries]

        rows = []
        for idx, e in enumerate(entries, start=1):
            name = (e.get("im:name") or {}).get("label")
            developer = (e.get("im:artist") or {}).get("label")

            # app id usually in id.attributes["im:id"]; id.label holds the app URL
            id_obj = e.get("id") or {}
            attrs = id_obj.get("attributes") or {}
            app_id = attrs.get("im:id")
            app_url = id_obj.get("label")

            rows.append({
                "rank": idx,  # rank is the entry's position in the feed
                "app_id": app_id,
                "name": name,
                "developer": developer,
                "app_url": app_url,
                "country": country,
                "chart": chart,
                "pulled_at": date.today().isoformat(),
            })
        return rows


    rows = parse_top_feed(payload, country=COUNTRY, chart="topfreeapplications")
    print("rows:", len(rows))
    print(rows[0] if rows else "no rows parsed")
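To make the nested lookups concrete, here is a hand-written payload in the shape the parser expects. The values are made up for illustration, not copied from a real feed, and `dig` is a hypothetical helper that walks nested dicts without raising on a missing key.

```python
# Hand-written sample mirroring the feed -> entry -> fields nesting.
# All values are invented for illustration.
sample = {
    "feed": {
        "entry": [
            {
                "im:name": {"label": "Example App"},
                "im:artist": {"label": "Example Developer"},
                "id": {
                    "label": "https://apps.apple.com/us/app/example/id123",
                    "attributes": {"im:id": "123"},
                },
            },
            # An entry with missing fields should not crash the parser.
            {"im:name": {"label": "Sparse App"}},
        ]
    }
}


def dig(obj, *keys):
    """Follow keys through nested dicts; return None once a level is missing."""
    for key in keys:
        if not isinstance(obj, dict):
            return None
        obj = obj.get(key)
    return obj


for rank, entry in enumerate(sample["feed"]["entry"], start=1):
    print(rank, dig(entry, "im:name", "label"), dig(entry, "id", "attributes", "im:id"))
```

The second entry prints `None` for its id, which is the case the QA step later needs to catch.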

Step 3: Enrich with app metadata (lookup by app id)

Ranks are useful, but for a durable dataset you’ll usually want more fields:

- primary genre/category

- bundle id

- current version

- release date

- price

- rating count

- average rating

A common technique is to call Apple’s lookup endpoint:

https://itunes.apple.com/lookup?id=APP_ID&country=us

The response is a JSON object with a results list, which is empty when the id is not found in that storefront.

We’ll implement a simple enrich step that looks up one id at a time and throttles between requests.

    import time


    def lookup_app(app_id: str, api_key: str, country: str) -> dict | None:
        url = f"https://itunes.apple.com/lookup?id={app_id}&country={country}"
        data = fetch_json(url, api_key)
        results = data.get("results") or []
        return results[0] if results else None


    def enrich_rows(rows: list[dict], api_key: str, country: str, *, sleep_s: float = 0.3) -> list[dict]:
        out = []
        for r in rows:
            app_id = r.get("app_id")
            meta = lookup_app(app_id, api_key, country) if app_id else None

            enriched = dict(r)  # copy so the input rows stay untouched
            if meta:
                enriched.update({
                    "bundle_id": meta.get("bundleId"),
                    "primary_genre": meta.get("primaryGenreName"),
                    "genres": meta.get("genres"),
                    "seller_name": meta.get("sellerName"),
                    "version": meta.get("version"),
                    "current_version_release_date": meta.get("currentVersionReleaseDate"),
                    "release_date": meta.get("releaseDate"),
                    "price": meta.get("price"),
                    "currency": meta.get("currency"),
                    "average_user_rating": meta.get("averageUserRating"),
                    "user_rating_count": meta.get("userRatingCount"),
                })

            # Rows without metadata are kept as ranking-only rows
            out.append(enriched)
            time.sleep(sleep_s)  # throttle between lookups
        return out
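The loop above makes one lookup request per app. The lookup endpoint also accepts several comma-separated ids in a single request, which reduces the request count. Below is a sketch of the URL-building half only. `chunked` and `batch_lookup_urls` are hypothetical helpers, and `batch_size=50` is an arbitrary choice, not a documented limit.

```python
from typing import Iterator


def chunked(items: list[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def batch_lookup_urls(app_ids: list[str], country: str, batch_size: int = 50) -> list[str]:
    """Build one lookup URL per batch of comma-separated app ids."""
    return [
        f"https://itunes.apple.com/lookup?id={','.join(chunk)}&country={country}"
        for chunk in chunked(app_ids, batch_size)
    ]


# Placeholder ids, batched two at a time to show the split.
for url in batch_lookup_urls(["1", "2", "3"], "us", batch_size=2):
    print(url)
```

Each URL can then go through `fetch_json`. A batched response holds many results, so they need to be matched back to rows, for example by comparing each result's `trackId` (converted with `str()`) to `app_id`, assuming that field is present in the response.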

If you’re pulling 100 apps across many countries, do lookups in a separate job and cache results by app_id.
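A cache keyed by app_id can be as simple as a dict persisted to a JSON file. The sketch below is one way to do it, not the only one: `load_cache`, `save_cache`, and `cached_lookup` are hypothetical names, and the fetcher is passed in so the demo runs without network access.

```python
import json
from pathlib import Path
from typing import Callable, Optional


def load_cache(path: Path) -> dict:
    """Read the cache file, or start empty if it does not exist yet."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {}


def save_cache(cache: dict, path: Path) -> None:
    """Persist the cache so the next run can reuse it."""
    path.write_text(json.dumps(cache, ensure_ascii=False), encoding="utf-8")


def cached_lookup(app_id: str, cache: dict,
                  fetch: Callable[[str], Optional[dict]]) -> Optional[dict]:
    """Return cached metadata for app_id, calling fetch only on a miss."""
    if app_id not in cache:
        cache[app_id] = fetch(app_id)
    return cache[app_id]


# Demo with a stand-in fetcher that records which ids it was asked for.
calls = []


def fake_fetch(app_id: str) -> dict:
    calls.append(app_id)
    return {"bundleId": f"com.example.app{app_id}"}


cache = {}
for app_id in ["1", "2", "1"]:
    cached_lookup(app_id, cache, fake_fetch)

print(calls)  # the repeated id is served from the cache, not fetched again
```

In the real pipeline, `fetch` would wrap `lookup_app`. Fields such as price and currency can differ between storefronts, so if you store those, keying the cache by country plus app_id is the safer choice.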

Step 4: Export daily dataset

    import csv
    import json


    def export_csv(rows: list[dict], path: str) -> None:
        if not rows:
            return

        # Union of keys across rows, since rows without metadata have fewer fields
        keys = sorted({k for r in rows for k in r.keys()})

        with open(path, "w", newline="", encoding="utf-8") as f:
            w = csv.DictWriter(f, fieldnames=keys)
            w.writeheader()
            w.writerows(rows)


    def export_json(rows: list[dict], path: str) -> None:
        with open(path, "w", encoding="utf-8") as f:
            json.dump(rows, f, ensure_ascii=False, indent=2)
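One detail worth handling before the CSV export: the enriched `genres` field is a list, and the `csv` module writes non-string values using `str()`, so the cell would contain a Python list literal. A small flattening step avoids that. `flatten_for_csv` is a hypothetical helper and the `|` separator is an arbitrary choice.

```python
def flatten_for_csv(row: dict, sep: str = "|") -> dict:
    """Return a copy of row with list/tuple values joined into one string."""
    flat = {}
    for key, value in row.items():
        if isinstance(value, (list, tuple)):
            flat[key] = sep.join(str(v) for v in value)
        else:
            flat[key] = value
    return flat


# Made-up row in the shape produced by the enrich step.
row = {"rank": 1, "genres": ["Games", "Action"], "price": 0.0}
print(flatten_for_csv(row))
```

Applying it as `export_csv([flatten_for_csv(r) for r in rows], path)` leaves the JSON export untouched, where a real list is the better representation.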

Putting it together:

    API_KEY = "API_KEY"
    COUNTRY = "us"
    CHART = "topfreeapplications"

    feed_url = f"https://itunes.apple.com/{COUNTRY}/rss/{CHART}/limit=100/json"
    payload = fetch_json(feed_url, API_KEY)

    rows = parse_top_feed(payload, country=COUNTRY, chart=CHART)
    rows = enrich_rows(rows[:25], API_KEY, COUNTRY)  # enrich a subset to start

    export_csv(rows, f"app_store_{COUNTRY}_{CHART}.csv")
    export_json(rows, f"app_store_{COUNTRY}_{CHART}.json")
    print("saved", len(rows))

Handling failures (retries, partial days)

For daily pipelines you want two guarantees:

- you never “hang”

- a partial failure doesn’t erase the day

Practical tips:

- always set timeouts (connect + read)

- store raw payloads for debugging (S3 / local)

- write outputs to a date-partitioned folder (YYYY-MM-DD/)

- if enrich step fails, still keep the ranking-only dataset
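Two of these tips can be sketched in a few lines: a retry wrapper with exponential backoff, and a helper that computes the date-partitioned folder. Both `with_retries` and `partition_path` are hypothetical names, and the retry count and delays are illustrative defaults rather than recommendations from ProxiesAPI or Apple.

```python
import time
from datetime import date
from pathlib import Path
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], *, attempts: int = 3, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn until it succeeds, waiting base_delay * 2**n between tries."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:  # in real use, narrow this (e.g. requests.RequestException)
            if attempt == attempts - 1:
                raise  # out of attempts: let the last error propagate
            sleep(base_delay * 2 ** attempt)


def partition_path(root: str, day: Optional[date] = None) -> Path:
    """Return root/YYYY-MM-DD (the caller creates it with mkdir)."""
    day = day or date.today()
    return Path(root) / day.isoformat()


# Demo: a function that fails twice, then succeeds. Delays are recorded
# instead of slept so the example finishes instantly.
state = {"calls": 0}


def flaky() -> str:
    state["calls"] += 1
    if state["calls"] < 3:
        raise RuntimeError("temporary failure")
    return "ok"


delays = []
result = with_retries(flaky, sleep=delays.append)
print(result, delays)
print(partition_path("data", date(2024, 1, 2)))
```

In the pipeline this would look like `with_retries(lambda: fetch_json(feed_url, API_KEY))`. To keep the ranking-only dataset when enrichment fails, export the parsed rows first and run the enrich step inside its own `try` block afterwards.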

QA checklist

- Chart endpoint returns JSON (not HTML)

- Rank count matches expected limit (e.g., 100)

- app_id is present for each row

- Lookup enrichment adds stable fields (bundle id, genre)

- Export files open cleanly
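The row-level items on this checklist can be automated. The sketch below returns a list of problems instead of raising, so a daily job can log them and decide whether to continue. `qa_problems` is a hypothetical helper, and the duplicate-id check is an extra assumption beyond the checklist.

```python
def qa_problems(rows: list[dict], expected_count: int = 100) -> list[str]:
    """Return human-readable problems found in the parsed rows (empty if none)."""
    problems = []

    if len(rows) != expected_count:
        problems.append(f"expected {expected_count} rows, got {len(rows)}")

    ranks = [r.get("rank") for r in rows]
    if ranks != list(range(1, len(rows) + 1)):
        problems.append("ranks are not 1..N in order")

    missing = [r.get("rank") for r in rows if not r.get("app_id")]
    if missing:
        problems.append(f"missing app_id at ranks {missing}")

    # Extra check (assumption): the same app should not appear twice in one chart.
    ids = [r["app_id"] for r in rows if r.get("app_id")]
    if len(ids) != len(set(ids)):
        problems.append("duplicate app_id values")

    return problems


# Made-up rows: the second one has no app_id.
sample_rows = [{"rank": 1, "app_id": "111"}, {"rank": 2, "app_id": None}]
print(qa_problems(sample_rows, expected_count=2))
```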

Final thoughts

App Store charts are a great “daily pull” dataset: structured, comparable over time, and high signal.

Start with one country + one chart.

Once it’s stable, expand the matrix:

- more countries

- more chart types

- scheduled runs

- cached metadata enrichment

That’s the difference between “a script” and a dataset.
