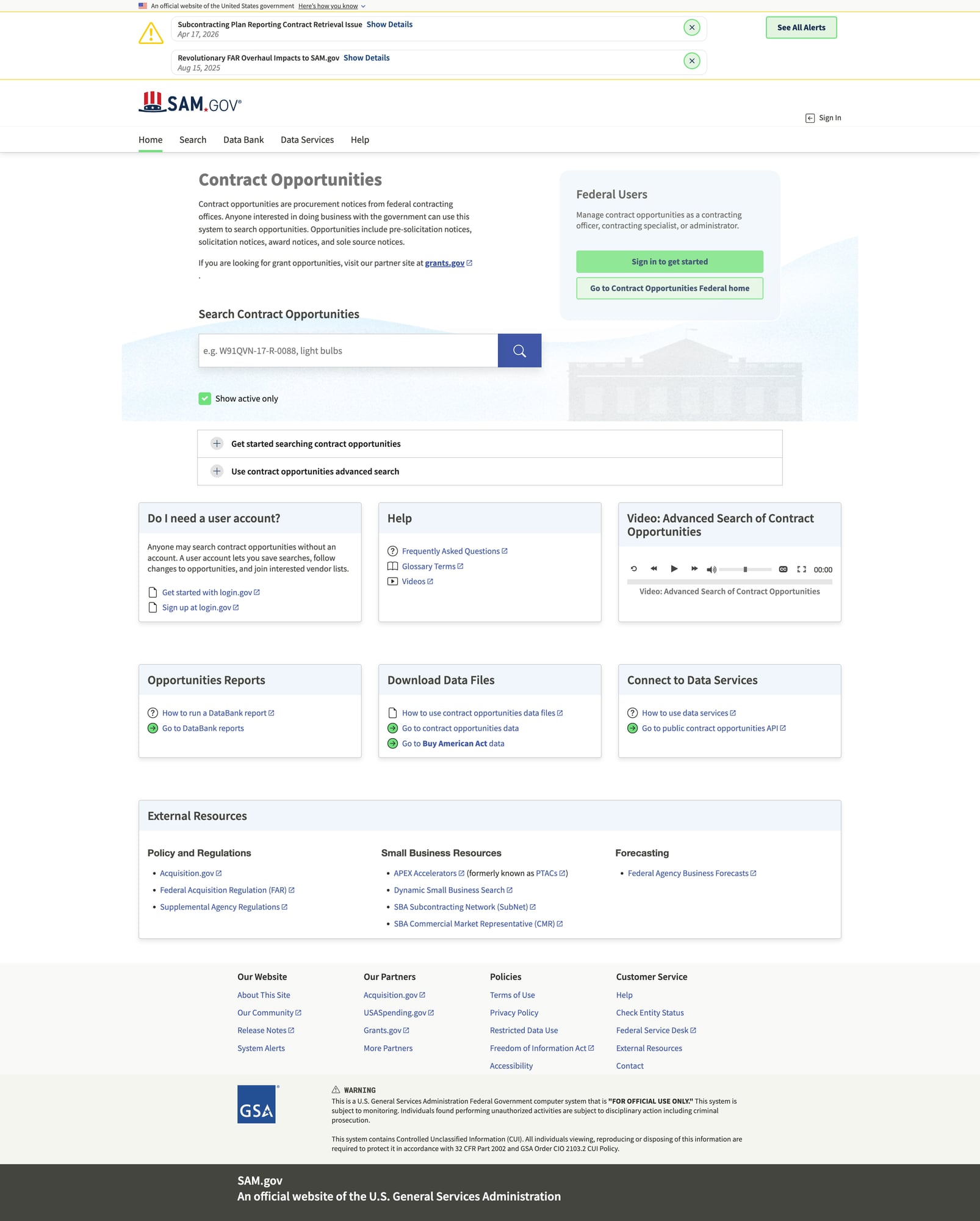

Scrape SAM.gov Contract Opportunities with Python (Search API + Dataset + Screenshots)

SAM.gov is the official U.S. government system for contract opportunities. The good news: unlike many “scrape-only” targets, SAM.gov provides a documented API for pulling opportunities.

So instead of reverse-engineering HTML, we’ll build a clean dataset builder around the official endpoint:

- query opportunities with filters (date range, keywords, set-asides, NAICS)

- paginate reliably

- normalize the results into a tidy schema

- export JSONL (stream-friendly) and CSV

We’ll also capture screenshots of the SAM.gov UI so it’s easy to validate what the API data corresponds to.

SAM.gov offers an official API for opportunities — use it first. When your workflow expands to crawling attachment pages, vendor sites, and linked documents, ProxiesAPI helps make the network layer reliable (timeouts, retries, IP rotation) without rewriting your scraper.

Important notes (before you touch the API)

- Get an API key
  - SAM.gov uses API keys for access.
  - This guide sends the key in an `X-Api-Key` header. The official examples pass it as an `api_key` query parameter, so switch to that if the header is rejected.
- Prefer the API over HTML
  - HTML scraping is more brittle.
  - The API is designed for exactly this workflow.
- Respect rate limits
  - Keep page sizes reasonable and cache responses you have already fetched.
  - Retry politely on transient errors.
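One way to act on the caching advice is a small on-disk cache keyed by the request parameters. The sketch below is only an illustration: `cache_key`, `cached_fetch`, and the `.samgov_cache` directory are hypothetical names, and `fetch` stands in for whatever function actually calls the API.

```python
import hashlib
import json
from pathlib import Path
from typing import Any, Callable


def cache_key(path: str, params: dict[str, Any]) -> str:
    # Sort keys so the same filters always map to the same cache entry.
    blob = json.dumps({"path": path, "params": params}, sort_keys=True)
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()


def cached_fetch(
    fetch: Callable[[str, dict[str, Any]], dict],
    path: str,
    params: dict[str, Any],
    cache_dir: str = ".samgov_cache",  # hypothetical location
) -> dict:
    folder = Path(cache_dir)
    folder.mkdir(parents=True, exist_ok=True)
    entry = folder / f"{cache_key(path, params)}.json"
    if entry.exists():
        # Cache hit: no network call, no rate-limit cost.
        return json.loads(entry.read_text(encoding="utf-8"))
    payload = fetch(path, params)
    entry.write_text(json.dumps(payload), encoding="utf-8")
    return payload
```

Because the date window is part of the key, yesterday's pages are reused while a new window triggers fresh requests. Delete the cache directory when you want to force a full refetch.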

Step 1: Environment and dependencies

```bash
python -m venv .venv
source .venv/bin/activate
pip install requests tenacity python-dateutil pandas
```

Step 2: Define the Opportunities API client

SAM.gov’s API surface evolves, but the common pattern is:

- base: `https://api.sam.gov/`

- opportunities search endpoint (varies by version)

- request parameters for filters + pagination

We’ll implement a client where the endpoint URL is configurable so you can adjust it if SAM changes versions.

Create a file samgov_opps.py:

```python
import os
import random
import time
from dataclasses import dataclass
from typing import Any

import requests
from tenacity import retry, retry_if_exception, stop_after_attempt, wait_exponential


@dataclass
class SamGovConfig:
    api_key: str
    base_url: str = "https://api.sam.gov"
    # Common opportunities endpoint (update if SAM publishes a different path/version)
    opportunities_path: str = "/opportunities/v2/search"
    timeout: tuple[int, int] = (10, 45)  # connect, read
    min_delay_s: float = 0.4
    max_delay_s: float = 1.2


def _is_transient(exc: BaseException) -> bool:
    # Retry network errors, 429 and 5xx. Other 4xx (bad key, wrong path) fail fast.
    if isinstance(exc, requests.HTTPError):
        status = exc.response.status_code if exc.response is not None else 0
        return status == 429 or status >= 500
    return isinstance(exc, requests.RequestException)


class SamGovClient:
    def __init__(self, cfg: SamGovConfig):
        self.cfg = cfg
        self.session = requests.Session()
        self.session.headers.update({
            "Accept": "application/json",
            "User-Agent": "proxiesapi-guides/1.0 (+https://proxiesapi.com)",
            "X-Api-Key": cfg.api_key,
        })

    def _polite_sleep(self):
        # Jittered delay so requests are not sent in a tight, regular loop.
        time.sleep(random.uniform(self.cfg.min_delay_s, self.cfg.max_delay_s))

    @retry(
        stop=stop_after_attempt(6),
        wait=wait_exponential(multiplier=1, min=2, max=30),
        retry=retry_if_exception(_is_transient),
        reraise=True,
    )
    def get(self, path: str, params: dict[str, Any]) -> dict:
        self._polite_sleep()
        url = self.cfg.base_url.rstrip("/") + path
        r = self.session.get(url, params=params, timeout=self.cfg.timeout)
        r.raise_for_status()
        return r.json()


def load_client() -> SamGovClient:
    api_key = os.getenv("SAMGOV_API_KEY")
    if not api_key:
        raise RuntimeError("Missing SAMGOV_API_KEY in environment")
    return SamGovClient(SamGovConfig(api_key=api_key))
```

This client gives you:

- retries

- timeouts

- jittered delays

That’s the foundation for “it runs overnight” rather than “it runs once”.
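To get a feel for how long a failing request can hold up a crawl, the sketch below estimates the waits between attempts. It is a standalone approximation, assuming the delay doubles on each retry starting from `multiplier` and is clamped between the minimum and maximum. The exact schedule depends on the installed tenacity version, and `backoff_schedule` is a hypothetical helper, not part of the client.

```python
def backoff_schedule(
    attempts: int, multiplier: float = 1, lo: float = 2, hi: float = 30
) -> list[float]:
    # One wait between each pair of consecutive attempts.
    waits = []
    for retry_number in range(1, attempts):
        raw = multiplier * 2 ** (retry_number - 1)
        waits.append(max(lo, min(raw, hi)))
    return waits


waits = backoff_schedule(attempts=6)
print(waits, "total:", sum(waits), "seconds")
```

Under those assumptions, six attempts spend about 32 seconds waiting, on top of the jittered delay before each attempt and any time lost to timeouts. That is acceptable for an overnight job, but worth knowing before you raise the attempt count.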

Step 3: Search opportunities with filters

You typically want to filter by:

- posted date range

- keyword text

- set-aside types

- place of performance

- NAICS codes

We’ll implement a minimal, sane default search:

- last N days

- optional keyword

- page size

```python
from datetime import datetime, timedelta, timezone


def fmt_date(d: datetime) -> str:
    # The v2 opportunities docs specify MM/dd/yyyy for postedFrom/postedTo.
    # Adjust the format here if your API version expects something else.
    return d.strftime("%m/%d/%Y")


def search_opportunities(
    client: SamGovClient,
    keyword: str | None = None,
    days_back: int = 7,
    page_size: int = 100,
    offset: int = 0,
) -> dict:
    end = datetime.now(timezone.utc)
    start = end - timedelta(days=days_back)

    params = {
        "limit": page_size,
        "offset": offset,
        # Parameter names vary by version; keep these configurable if needed.
        "postedFrom": fmt_date(start),
        "postedTo": fmt_date(end),
    }

    if keyword:
        # v2 documents `title` for keyword matching on the notice title;
        # swap in the name your API version uses if it differs.
        params["title"] = keyword

    return client.get(client.cfg.opportunities_path, params=params)
```

Inspect one response

```python
if __name__ == "__main__":
    client = load_client()
    data = search_opportunities(client, keyword="software", days_back=3, page_size=25)
    print(data.keys())
```

If this fails with a 404 or schema mismatch, SAM has likely moved the endpoint path/params.

Fix: update opportunities_path and param names to match current SAM documentation.
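When the schema looks unfamiliar, it helps to summarize the payload before touching the parsing code. The helper below is a small sketch for that. `describe_payload` is a hypothetical name and the sample payload is made up, so the real key names will differ.

```python
from typing import Any


def describe_payload(payload: dict[str, Any]) -> dict[str, str]:
    # Summarize each top-level key so the list of notices is easy to spot.
    summary = {}
    for key, value in payload.items():
        if isinstance(value, list):
            summary[key] = f"list[{len(value)}]"
        elif isinstance(value, dict):
            summary[key] = f"dict with keys {sorted(value)}"
        else:
            summary[key] = f"{type(value).__name__}: {value!r}"
    return summary


# Made-up payload for illustration; real key names depend on the API version.
sample = {"totalRecords": 2, "limit": 25, "items": [{"id": "a"}, {"id": "b"}]}
for key, desc in describe_payload(sample).items():
    print(f"{key}: {desc}")
```

The key whose value is a list of dicts is almost always the one holding the notices.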

Step 4: Normalize results into a tidy schema

API responses usually contain nested objects. For analysis, you want flat, consistent rows.

Here’s a normalizer that tries to extract fields that commonly exist:

- notice id

- title

- solicitation number

- agency / office

- posted date

- response due date

- NAICS

- place of performance

- URL

```python
from dateutil import parser as dtparse


def safe_dt(s: str | None):
    # Return an ISO 8601 string when parsable, otherwise the original value.
    if not s:
        return None
    try:
        return dtparse.parse(s).isoformat()
    except Exception:
        return s


def _name(value):
    # Some fields arrive as {"name": ...} objects, others as plain strings.
    return value.get("name") if isinstance(value, dict) else value


def normalize_notice(n: dict) -> dict:
    return {
        "notice_id": n.get("noticeId") or n.get("id") or n.get("notice_id"),
        "title": n.get("title") or n.get("noticeTitle"),
        "solicitation_number": n.get("solicitationNumber") or n.get("solicitation_number"),
        # fullParentPathName is the v2 field for the agency hierarchy.
        "agency": _name(n.get("department") or n.get("agency") or n.get("fullParentPathName")),
        "office": _name(n.get("office")),
        "posted_date": safe_dt(n.get("postedDate") or n.get("posted_date")),
        "response_deadline": safe_dt(n.get("responseDeadLine") or n.get("response_deadline") or n.get("dueDate")),
        "naics": n.get("naics") or n.get("naicsCode"),
        "place_of_performance": n.get("placeOfPerformance") or n.get("place_of_performance"),
        "type": n.get("type"),
        "set_aside": n.get("setAside") or n.get("typeOfSetAside") or n.get("set_aside"),
        "ui_url": n.get("uiLink") or n.get("url") or n.get("link"),
        "raw": n,  # keep the original record for debugging and re-normalizing
    }
```

Step 5: Pagination (crawl all pages)

Most SAM endpoints use offset + limit.

We’ll keep crawling until we get fewer than limit items.

```python
import json


def iter_notices(payload: dict) -> list[dict]:
    # Different versions use different keys; v2 responses use "opportunitiesData".
    for key in ["opportunitiesData", "opportunities", "data", "results", "notices"]:
        v = payload.get(key)
        if isinstance(v, list):
            return v
    return []


def crawl_all(
    client: SamGovClient,
    keyword: str | None = None,
    days_back: int = 7,
    page_size: int = 100,
    max_pages: int = 50,
) -> list[dict]:
    out = []

    for page in range(max_pages):
        # Assumes offset counts records. If the current docs define it as a
        # page index, pass `page` instead.
        offset = page * page_size
        payload = search_opportunities(
            client,
            keyword=keyword,
            days_back=days_back,
            page_size=page_size,
            offset=offset,
        )

        notices = iter_notices(payload)
        print(f"page {page+1}: {len(notices)}")

        for n in notices:
            out.append(normalize_notice(n))

        # A short page means we have reached the end of the result set.
        if len(notices) < page_size:
            break

    return out


if __name__ == "__main__":
    client = load_client()
    rows = crawl_all(client, keyword="cybersecurity", days_back=14, page_size=50)
    print("rows:", len(rows))
    if rows:  # avoid an IndexError when the search returns nothing
        print(json.dumps(rows[0], indent=2)[:800])
```
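The stop rule is easier to trust once you have seen it run against a fake data source. The sketch below isolates the paging loop from the network and also drops repeated IDs, which can appear if new notices are posted while you crawl. `paginate` and `fake_fetch` are hypothetical names, and the deduplication assumes each row carries a `notice_id`.

```python
from typing import Callable, Iterator


def paginate(
    fetch_page: Callable[[int, int], list[dict]],
    page_size: int = 100,
    max_pages: int = 50,
) -> Iterator[dict]:
    seen = set()
    for page in range(max_pages):
        items = fetch_page(page * page_size, page_size)
        for item in items:
            key = item.get("notice_id")
            if key is not None:
                if key in seen:
                    continue  # a record shifted between pages while we crawled
                seen.add(key)
            yield item
        if len(items) < page_size:
            break  # short page: nothing left to fetch


# Fake data source standing in for the API: 5 records, served 2 at a time.
records = [{"notice_id": f"n{i}"} for i in range(5)]
calls = []


def fake_fetch(offset: int, limit: int) -> list[dict]:
    calls.append(offset)
    return records[offset : offset + limit]


print([r["notice_id"] for r in paginate(fake_fetch, page_size=2)])
print("offsets requested:", calls)
```

One consequence of the "fewer than limit" rule: when the total is an exact multiple of the page size, the loop makes one extra request that returns an empty page before stopping.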

Step 6: Export to JSONL + CSV

```python
import json

import pandas as pd


def export(rows: list[dict], stem: str = "samgov_opportunities"):
    # JSONL keeps every field, including the nested "raw" record.
    jsonl_path = f"{stem}.jsonl"
    with open(jsonl_path, "w", encoding="utf-8") as f:
        for r in rows:
            f.write(json.dumps(r, ensure_ascii=False) + "\n")

    # CSV is for spreadsheets: drop the bulky "raw" column by default.
    flat = [{k: v for k, v in r.items() if k != "raw"} for r in rows]
    df = pd.DataFrame(flat)
    csv_path = f"{stem}.csv"
    df.to_csv(csv_path, index=False)

    print("wrote", jsonl_path, "and", csv_path, "rows:", len(rows))
```
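JSONL is stream-friendly in both directions: the file can be read back one record at a time. Here is a minimal reader sketch plus a simple tally. `read_jsonl` and `count_by` are hypothetical helpers, and the file name in the usage note assumes the default `stem`.

```python
import json
from typing import Iterator


def read_jsonl(path: str) -> Iterator[dict]:
    # Yield one record at a time so large exports never sit fully in memory.
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:
                yield json.loads(line)


def count_by(path: str, field: str) -> dict[str, int]:
    # Tally rows by the string value of one field (missing values count as "None").
    counts: dict[str, int] = {}
    for row in read_jsonl(path):
        key = str(row.get(field))
        counts[key] = counts.get(key, 0) + 1
    return counts
```

For example, `count_by("samgov_opportunities.jsonl", "type")` gives a quick breakdown of notice types without loading pandas.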

Optional: Crawl linked attachments (where ProxiesAPI helps)

The SAM API gets you opportunity records. But real workflows often also:

- download attachments (PDFs, Word docs)

- crawl vendor/agency pages linked from notices

- follow “additional info” URLs

Those are classic “web scraping” tasks and can be much less stable than the API.

If you add an attachments crawler, that’s where ProxiesAPI is useful:

- consistent proxy configuration across many target domains

- fewer failures on large batch downloads

- IP rotation when a domain throttles you
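A starting point for an attachments crawler might look like the sketch below. It uses only the standard library to stay dependency-free, and it leaves out proxy configuration because that depends on your provider. `safe_filename` and `download` are hypothetical helpers, and the attachment URLs are assumed to come from whichever link field your notices expose.

```python
import re
import shutil
import urllib.request
from pathlib import Path
from urllib.parse import unquote, urlparse


def safe_filename(url: str, default: str = "attachment.bin") -> str:
    # Use the last path segment, stripped of anything unsafe for a filename.
    name = unquote(Path(urlparse(url).path).name)
    name = re.sub(r"[^A-Za-z0-9._-]+", "_", name).strip("._")
    return name or default


def download(url: str, dest_dir: str = "attachments", timeout: float = 60) -> Path:
    folder = Path(dest_dir)
    folder.mkdir(parents=True, exist_ok=True)
    target = folder / safe_filename(url)
    # Stream to disk instead of loading the whole file into memory.
    with urllib.request.urlopen(url, timeout=timeout) as resp, open(target, "wb") as out:
        shutil.copyfileobj(resp, out)
    return target
```

Sanitizing the name matters because attachment URLs come from third parties: a crafted path should never be able to write outside `dest_dir`. Two different URLs can still map to the same filename, so a real crawler would also prefix the notice ID or a hash.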

QA checklist

- you can run 1 request and see a JSON payload

- crawling prints pages and stops naturally

- `notice_id` and `title` look correct for 10 samples

- exports open in your spreadsheet tool

- if endpoint/params changed, you updated `opportunities_path` and param names

A quick “do not do this” list

- Don’t scrape HTML when the API gives you the data.

- Don’t parallelize aggressively; start with serial pagination.

- Don’t store raw PII or sensitive fields unnecessarily.

If you build around the API first, you’ll end up with a cleaner, more robust dataset pipeline — and you’ll only need scraping/proxies for the parts that truly require it.
