Scrape Hotel Prices from Booking.com (Python) — Dates, Room Types, Total Price

Booking.com search results are a great example of “real-world scraping”:

- the layout is structured (hotel cards)

- price changes with dates and occupancy

- pages can be localized and A/B tested

In this guide we’ll build a Booking.com hotel price scraper in Python that:

- constructs a search URL with:

  - destination

  - check-in / check-out

  - adults / rooms

- extracts hotel cards with:

  - name

  - address (best-effort)

  - review score (best-effort)

  - displayed “total price” (best-effort)

- optionally follows a hotel page to extract a few room offer rows:

  - room type

  - total price

We’ll keep it honest: Booking.com renders parts of its pages dynamically, and the markup varies by region. This tutorial focuses on parsing the HTML you actually receive and adding the guardrails you need in production.

Travel sites often throttle high-volume crawls. ProxiesAPI gives you a consistent proxy layer and rotation so you can retry and spread load. You still need respectful rates and robust parsing.

Responsible scraping note

Travel inventory is sensitive. Avoid:

- hammering the site

- scraping personal data

- violating applicable terms/laws

If you need large-scale pricing data, consider licensed providers.

Step 0: Build a Booking.com search URL with dates + occupancy

Booking.com search URLs often look like this (simplified):

https://www.booking.com/searchresults.html?ss=New+York&checkin=2026-06-10&checkout=2026-06-12&group_adults=2&no_rooms=1

We’ll generate URLs with urllib.parse so it’s predictable.

```python
from urllib.parse import urlencode


def build_search_url(
    destination: str,
    checkin: str,
    checkout: str,
    adults: int = 2,
    rooms: int = 1,
    currency: str = "USD",
    lang: str = "en-us",
) -> str:
    base = "https://www.booking.com/searchresults.html"
    params = {
        "ss": destination,
        "checkin": checkin,    # YYYY-MM-DD
        "checkout": checkout,  # YYYY-MM-DD
        "group_adults": adults,
        "no_rooms": rooms,
        "selected_currency": currency,
        "lang": lang,
    }
    # urlencode escapes spaces and special characters ("New York" -> "New+York")
    return base + "?" + urlencode(params)


print(
    build_search_url(
        "New York",
        "2026-06-10",
        "2026-06-12",
        adults=2,
        rooms=1,
    )
)
```
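Because the price Booking.com displays covers the whole stay, it is worth sanity-checking a generated URL before spending a request on it. The sketch below is a hypothetical helper (`check_search_url` is not part of any library) that parses the query string back out with the standard library, confirms the parameters this guide relies on are present, and returns the number of nights so a displayed total can later be turned into an approximate per-night rate.

```python
from datetime import date
from urllib.parse import parse_qs, urlsplit

# Parameters this guide's search URLs rely on (assumed required for a priced search)
REQUIRED_PARAMS = ("ss", "checkin", "checkout", "group_adults", "no_rooms")


def check_search_url(url: str) -> int:
    """Sanity-check a search URL and return the number of nights it covers.

    Raises ValueError if a required parameter is missing or the stay is invalid.
    """
    query = parse_qs(urlsplit(url).query)
    missing = [p for p in REQUIRED_PARAMS if p not in query]
    if missing:
        raise ValueError(f"missing params: {missing}")

    checkin = date.fromisoformat(query["checkin"][0])
    checkout = date.fromisoformat(query["checkout"][0])
    nights = (checkout - checkin).days
    if nights <= 0:
        raise ValueError("checkout must be after checkin")

    if int(query["group_adults"][0]) < 1 or int(query["no_rooms"][0]) < 1:
        raise ValueError("need at least one adult and one room")
    return nights
```

For example, `check_search_url(build_search_url("New York", "2026-06-10", "2026-06-12"))` returns 2.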

Step 1: Fetch HTML with retries + block detection

Booking.com can return:

- consent pages

- bot checks

- localized variants

So we:

- set realistic headers

- implement retries/backoff

- treat suspicious pages as retryable

```python
import random
import time
from dataclasses import dataclass

import requests

TIMEOUT = (10, 40)  # (connect, read) seconds

USER_AGENTS = [
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0 Safari/537.36",
]


class BlockedError(RuntimeError):
    """Raised when a response looks like throttling, a bot check, or a consent page."""


@dataclass
class FetchResult:
    url: str
    status_code: int
    text: str


def looks_blocked(html: str) -> bool:
    if not html:
        return True
    h = html.lower()
    # Broad needles such as "consent" or "captcha" can also appear in normal pages
    # (e.g. cookie-banner scripts); tune this list against saved HTML.
    needles = [
        "are you a robot",
        "captcha",
        "verify you are a human",
        "access denied",
        "consent",
    ]
    return any(n in h for n in needles)


def fetch(session: requests.Session, url: str, max_retries: int = 4) -> FetchResult:
    last_exc = None
    for attempt in range(1, max_retries + 1):
        try:
            headers = {
                "User-Agent": random.choice(USER_AGENTS),
                "Accept-Language": "en-US,en;q=0.9",
                "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
            }
            # --- ProxiesAPI integration point ---
            # If ProxiesAPI gives you a proxy URL/rotating endpoint, wire it here.
            # proxies = {"http": PROXY_URL, "https": PROXY_URL}
            # r = session.get(url, headers=headers, timeout=TIMEOUT, proxies=proxies)
            # -----------------------------------
            r = session.get(url, headers=headers, timeout=TIMEOUT)
            text = r.text or ""
            if r.status_code in (429, 503) or looks_blocked(text):
                raise BlockedError(f"blocked_or_throttled status={r.status_code}")
            r.raise_for_status()
            return FetchResult(url=url, status_code=r.status_code, text=text)
        except (requests.RequestException, BlockedError) as e:
            # Network errors, HTTP errors, and block pages are all retried
            last_exc = e
            if attempt == max_retries:
                break  # no point sleeping after the final attempt
            # Exponential backoff capped at 15s, plus up to 1s of jitter
            sleep_s = min(15, 1.7 ** attempt) + random.random()
            print(f"attempt {attempt}/{max_retries} failed: {e}; sleeping {sleep_s:.1f}s")
            time.sleep(sleep_s)
    raise RuntimeError(f"failed after {max_retries} retries: {url}") from last_exc
```
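If you would rather push status-code retries down into the transport layer, requests can mount an `HTTPAdapter` configured with urllib3's `Retry`. This is a swapped-in alternative, not what `fetch()` above does, and it only sees status codes: a 200 response carrying a bot check or consent page still needs the `looks_blocked()` check. A sketch, assuming urllib3 1.26 or newer (needed for `allowed_methods`); `make_session` is a hypothetical name.

```python
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry


def make_session(total_retries: int = 4) -> requests.Session:
    """Session that retries connection errors and 429/503 responses at the transport layer."""
    retry = Retry(
        total=total_retries,
        backoff_factor=1.0,                  # sleep grows exponentially between retries
        status_forcelist=(429, 503),         # same statuses fetch() treats as throttling
        allowed_methods=frozenset({"GET"}),  # only retry idempotent page loads
        respect_retry_after_header=True,     # honor Retry-After on 429/503 if present
        raise_on_status=False,               # hand back the last response instead of raising
    )
    adapter = HTTPAdapter(max_retries=retry)
    session = requests.Session()
    session.mount("https://", adapter)
    session.mount("http://", adapter)
    return session
```

If you pass this session into `fetch()`, the two retry loops multiply, so lower one of them to keep the total request count conservative.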

Step 2: Parse hotel cards (name, link, score, price)

Booking.com’s markup changes, so we parse with:

- robust attribute selectors

- fallback paths

Common patterns:

- property cards often have a `data-testid` like `property-card`

- the title might be in an element with `data-testid="title"`

- the price might be in an element with `data-testid="price-and-discounted-price"`

We’ll extract what’s on the results page first.

```python
import re
from urllib.parse import urljoin

from bs4 import BeautifulSoup  # the "lxml" parser below needs: pip install lxml

BASE = "https://www.booking.com"


def clean_text(s: str) -> str:
    # Collapse runs of whitespace (including newlines) into single spaces
    return re.sub(r"\s+", " ", (s or "").strip())


def parse_money(text: str):
    if not text:
        return None
    # Pull the first number; currency symbols and locales vary.
    # Assumes "," is a thousands separator ("US$1,234"); "1.234,56"-style
    # prices will be misread.
    m = re.search(r"(\d[\d,.]*)", text)
    if not m:
        return None
    val = m.group(1).replace(",", "")
    try:
        return float(val)
    except ValueError:
        return None


def parse_search_results(html: str):
    soup = BeautifulSoup(html, "lxml")
    cards = soup.select('[data-testid="property-card"]')
    out = []
    for c in cards:
        title_el = c.select_one('[data-testid="title"]')
        name = clean_text(title_el.get_text(" ", strip=True)) if title_el else None

        # Prefer the dedicated title link; fall back to the first link in the card
        link_el = c.select_one('a[data-testid="title-link"]') or c.select_one("a")
        href = link_el.get("href") if link_el else None
        url = urljoin(BASE, href) if href else None

        addr_el = c.select_one('[data-testid="address"]')
        address = clean_text(addr_el.get_text(" ", strip=True)) if addr_el else None

        score_el = c.select_one('[data-testid="review-score"]')
        score_text = clean_text(score_el.get_text(" ", strip=True)) if score_el else None

        price_el = c.select_one('[data-testid="price-and-discounted-price"]')
        price_text = clean_text(price_el.get_text(" ", strip=True)) if price_el else None
        total_price = parse_money(price_text)

        # Skip cards that yielded nothing identifiable
        if not name and not url:
            continue

        out.append(
            {
                "name": name,
                "url": url,
                "address": address,
                "review_score_text": score_text,
                "total_price_text": price_text,
                "total_price_value": total_price,
            }
        )
    return out
```
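`parse_money` treats commas as thousands separators, which misreads prices written as `1.234,56` in many European locales. If you scrape with a non-US `lang` or currency, a heuristic like this hypothetical `parse_money_locale_aware` is one option. It is a sketch under stated assumptions rather than a full locale parser; for instance, an input like `1.234` is read as 1234.

```python
import re


def parse_money_locale_aware(text):
    """Best-effort numeric price from strings like "US$1,234" or "€ 1.234,56".

    Heuristic (an assumption, not a Booking.com rule):
    - if both "," and "." occur, whichever appears last is the decimal separator
    - if only one kind occurs, exactly once, with 1-2 digits after it, it is decimal
    - otherwise separators are treated as thousands separators
    """
    if not text:
        return None
    # First number-like run; NBSP / narrow NBSP are allowed as thousands separators
    m = re.search(r"\d[\d.,\u00a0\u202f]*", text)
    if not m:
        return None
    token = re.sub(r"[\u00a0\u202f]", "", m.group(0)).rstrip(".,")

    last_comma, last_dot = token.rfind(","), token.rfind(".")
    if last_comma != -1 and last_dot != -1:
        decimal = "," if last_comma > last_dot else "."
    elif last_comma != -1 or last_dot != -1:
        sep = "," if last_comma != -1 else "."
        tail = token.rsplit(sep, 1)[1]
        decimal = sep if token.count(sep) == 1 and len(tail) in (1, 2) else None
    else:
        decimal = None

    # Drop thousands separators, then normalise the decimal separator to "."
    for thousands_sep in {",", "."} - {decimal}:
        token = token.replace(thousands_sep, "")
    if decimal:
        token = token.replace(decimal, ".")
    try:
        return float(token)
    except ValueError:
        return None
```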

Sanity check run

```python
import json

import requests

# Assumes build_search_url, fetch, and parse_search_results from the steps above
# live in the same file.
if __name__ == "__main__":
    url = build_search_url(
        "New York",
        "2026-06-10",
        "2026-06-12",
        adults=2,
        rooms=1,
    )
    s = requests.Session()
    res = fetch(s, url)
    hotels = parse_search_results(res.text)
    print("hotels parsed:", len(hotels))
    print("first:", hotels[0] if hotels else None)
    with open("booking_hotels.json", "w", encoding="utf-8") as f:
        json.dump(hotels, f, ensure_ascii=False, indent=2)
```
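Beyond the hotel count, a quick price summary helps spot selector drift: if `with_price` is far below `hotels`, the price selector has probably stopped matching. A small standard-library sketch over the list `parse_search_results()` returns (`summarize_prices` is a hypothetical name):

```python
from statistics import median


def summarize_prices(hotels):
    """Quick stats over parsed hotels; rows without a numeric price are excluded."""
    prices = [
        h["total_price_value"]
        for h in hotels
        if h.get("total_price_value") is not None
    ]
    return {
        "hotels": len(hotels),
        "with_price": len(prices),
        "min": min(prices) if prices else None,
        "median": median(prices) if prices else None,
        "max": max(prices) if prices else None,
    }
```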

Step 3 (optional): Extract room types + offer prices from a hotel page

Room offer tables are often more dynamic and may require JavaScript.

But you can still:

- fetch the hotel page HTML

- look for recognizable blocks (e.g., “room name”/“price” pairs)

- treat it as best-effort

Here’s a starter parser that tries a couple of patterns.

```python
from bs4 import BeautifulSoup


def parse_room_offers(hotel_html: str, max_offers: int = 10):
    soup = BeautifulSoup(hotel_html, "lxml")
    offers = []

    # Pattern 1: elements with data-testid hints
    rows = soup.select('[data-testid="room-row"]')
    for r in rows:
        room_el = r.select_one('[data-testid="room-name"]')
        price_el = r.select_one('[data-testid="price-and-discounted-price"]')
        room = room_el.get_text(" ", strip=True) if room_el else None
        price_text = price_el.get_text(" ", strip=True) if price_el else None
        if room or price_text:
            offers.append({"room_type": room, "total_price_text": price_text})
        if len(offers) >= max_offers:
            return offers

    # Pattern 2 (fallback): scanning every short div/span that mentions "room"
    # is too noisy to yield reliable room/price pairs, so it is deliberately
    # left unimplemented. An empty result is a signal to render the page in a
    # real browser instead of guessing.
    return offers
```
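Hotel pages typically only show room prices once dates are set, so when following a hotel link from Step 2 it helps to make sure the stay parameters travel with it. This sketch assumes hotel pages accept the same `checkin`/`checkout`/`group_adults`/`no_rooms` parameters as the search URL (verify against a real link from your results); `with_stay_params` and the example hotel path are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_stay_params(hotel_url: str, checkin: str, checkout: str,
                     adults: int = 2, rooms: int = 1) -> str:
    """Return hotel_url with stay parameters set, keeping its other query params.

    Existing checkin/checkout/occupancy values are overwritten. Repeated keys
    collapse to their last value because the query is rebuilt from a dict.
    """
    parts = urlsplit(hotel_url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.update(
        {
            "checkin": checkin,
            "checkout": checkout,
            "group_adults": adults,
            "no_rooms": rooms,
        }
    )
    # Path and #fragment are preserved; only the query string is rebuilt
    return urlunsplit(parts._replace(query=urlencode(query)))
```

The resulting URL can go straight into `fetch()` and then `parse_room_offers()`.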

If you consistently need room-level pricing, the pragmatic route is a real browser:

- Playwright (headful or headless)

- a small request budget

- screenshots for debugging

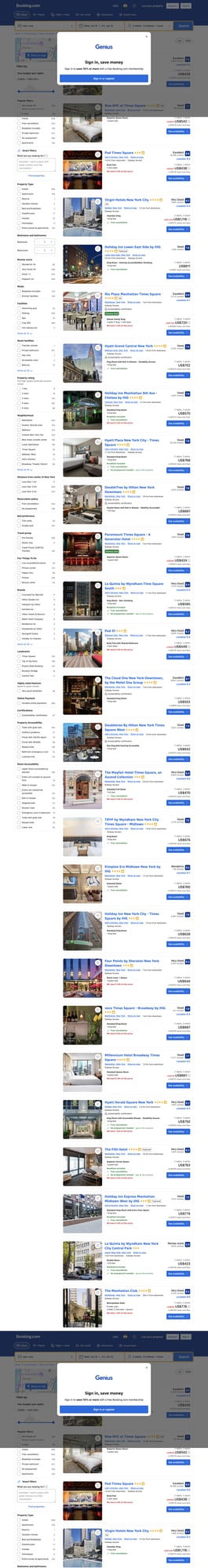

Screenshot-based verification (why it matters)

Sites change. A screenshot is your “receipt” that:

- the page loaded

- your query (dates/occupancy) is correct

- the prices you parsed match what a human sees

This post includes a screenshot of a live Booking.com results page so you can cross-check quickly.

Where ProxiesAPI fits (without overclaiming)

ProxiesAPI helps you:

- rotate IPs across requests

- reduce per-IP throttling

- retry with a consistent proxy layer

It does not guarantee you’ll bypass:

- bot checks

- JavaScript-only rendering

- consent flows

Use it as one part of a stable scraping stack.

QA checklist

- Your search URL includes check-in/check-out and adult/room counts

- Parsed hotel count roughly matches what you see (not zero)

- Block/consent pages are detected and retried

- You store raw HTML snapshots for debugging

- You keep rate limits conservative
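For the raw-HTML-snapshot item, a minimal sketch: write each fetched page to disk with a timestamp and a short hash of its URL, so a parser change can be re-tested against saved HTML without re-fetching. The directory layout and the `save_snapshot` name are assumptions, not a convention from any tool.

```python
import hashlib
import time
from pathlib import Path


def save_snapshot(html: str, url: str, out_dir: str = "snapshots") -> Path:
    """Write raw HTML to <out_dir>/<unix_ts>_<url_hash>.html and return the path."""
    folder = Path(out_dir)
    folder.mkdir(parents=True, exist_ok=True)
    # A short URL hash keeps filenames filesystem-safe and groups repeat fetches
    url_hash = hashlib.sha1(url.encode("utf-8")).hexdigest()[:12]
    path = folder / f"{int(time.time())}_{url_hash}.html"
    path.write_text(html, encoding="utf-8")
    return path
```

With the `fetch()` helper from Step 1, that is `save_snapshot(res.text, res.url)` right after each successful request.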

Next upgrades

- Persist to SQLite keyed by hotel URL

- Add “changed price” alerts

- Add Playwright fallback for room-offer parsing

- Add sticky-IP sessions for multi-step flows (e.g. a search page followed by a hotel page)
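The first two upgrades fit together: keying rows by hotel URL makes an upsert natural, and reading the stored price before writing gives you a change signal for alerts. A sketch using the standard `sqlite3` module; the table, columns, and `upsert_hotel` are hypothetical, and `ON CONFLICT ... DO UPDATE` needs SQLite 3.24 or newer (check `sqlite3.sqlite_version`). Search-result links may carry per-session query parameters; if so, strip them before using the URL as a key.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS hotel_prices (
    url TEXT PRIMARY KEY,
    name TEXT,
    total_price_value REAL,
    updated_at TEXT DEFAULT CURRENT_TIMESTAMP
)
"""


def upsert_hotel(conn: sqlite3.Connection, hotel: dict):
    """Insert or update one hotel keyed by URL.

    Returns (old_price, new_price) if a previously stored price changed, else None.
    """
    row = conn.execute(
        "SELECT total_price_value FROM hotel_prices WHERE url = ?", (hotel["url"],)
    ).fetchone()
    new_price = hotel.get("total_price_value")
    conn.execute(
        """
        INSERT INTO hotel_prices (url, name, total_price_value, updated_at)
        VALUES (?, ?, ?, CURRENT_TIMESTAMP)
        ON CONFLICT(url) DO UPDATE SET
            name = excluded.name,
            total_price_value = excluded.total_price_value,
            updated_at = CURRENT_TIMESTAMP
        """,
        (hotel["url"], hotel.get("name"), new_price),
    )
    conn.commit()
    # Only report a change for hotels we had already seen
    if row is not None and row[0] != new_price:
        return (row[0], new_price)
    return None
```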
