Scrape Stack Overflow Questions and Answers by Tag (Python + ProxiesAPI)

Stack Overflow is a great scraping target because it’s mostly server-rendered HTML with consistent structure.

In this tutorial we’ll build a real Python scraper that:

- crawls a tag feed (e.g. python, javascript, docker)

- paginates multiple pages

- visits each question page

- extracts:

  - title

  - tags

  - score / vote count

  - asked date (best-effort)

  - accepted answer (text + score when available)

- exports to JSON Lines

Stack Overflow is mostly server-rendered HTML, but at scale you’ll hit throttles and intermittent blocks. ProxiesAPI helps keep tag crawls stable as you paginate and fetch thousands of question pages.

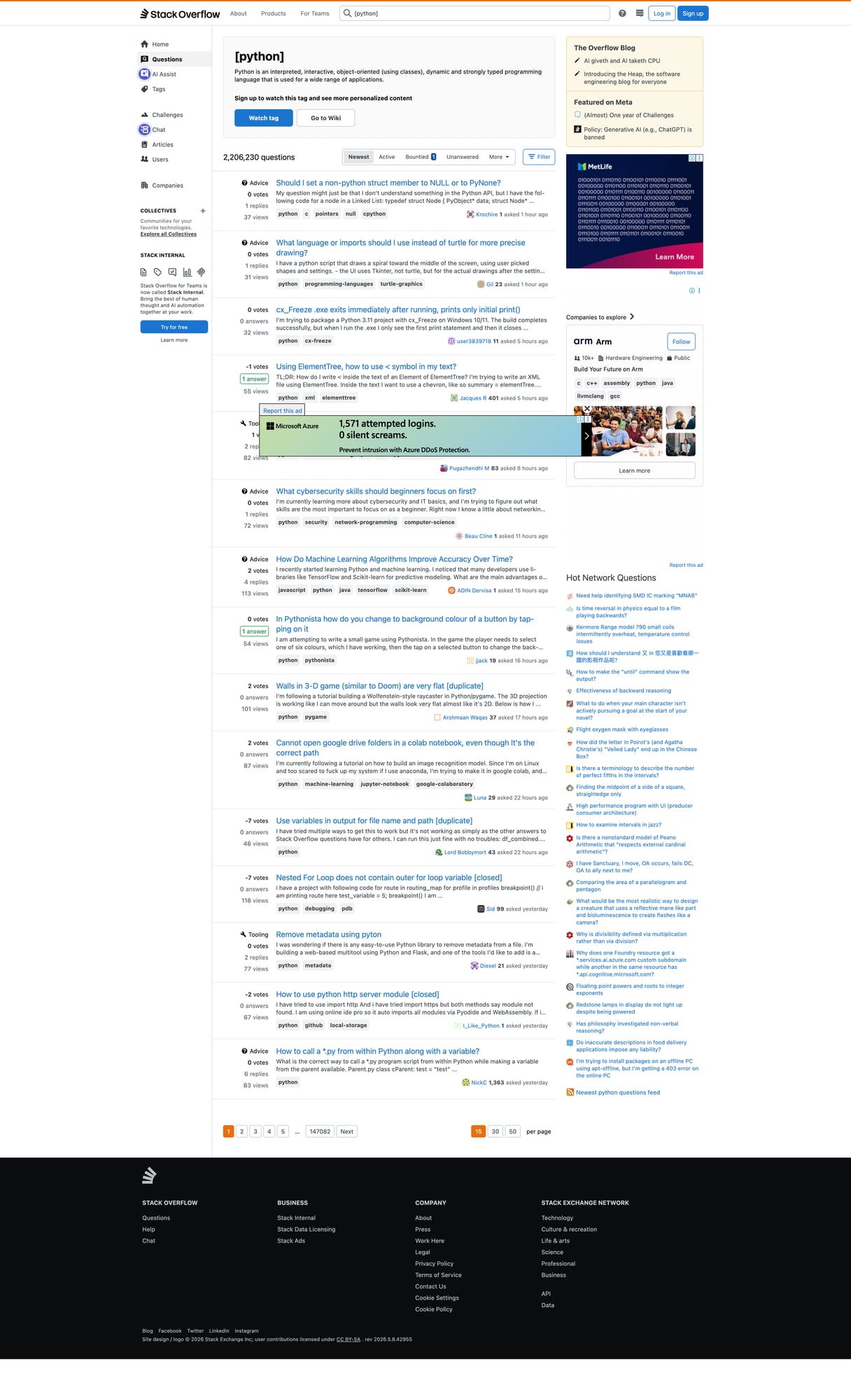

What we’re scraping

Two page types:

- Tag feed (list of questions)

https://stackoverflow.com/questions/tagged/python?tab=Newest&page=1&pagesize=15

- Question page (question + answers)

https://stackoverflow.com/questions/QUESTION_ID/some-slug

We’ll scrape the tag feed to collect question URLs, then scrape each question page for details.

Setup

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml tenacity

Step 1: Fetch with sessions + timeouts + retries (and ProxiesAPI)

As your crawl grows (many pages + many question pages), the network layer is what breaks first.

We’ll:

- use requests.Session()

- set realistic headers

- configure ProxiesAPI via environment variables

- retry on transient failures (timeouts, connection errors, 429/403, 5xx)

import os
import random
import time

import requests
from tenacity import retry, retry_if_exception_type, stop_after_attempt, wait_exponential

# (connect timeout, read timeout) in seconds
TIMEOUT = (10, 30)

HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/125.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
}


def build_proxies_from_env() -> dict | None:
    # A single URL, if set, is used for both schemes.
    p = os.getenv("PROXIESAPI_PROXY_URL")
    if p:
        return {"http": p, "https": p}

    # Otherwise accept per-scheme URLs; if only one is set, use it for both.
    http_p = os.getenv("PROXIESAPI_HTTP_PROXY")
    https_p = os.getenv("PROXIESAPI_HTTPS_PROXY")
    if http_p or https_p:
        return {"http": http_p or https_p, "https": https_p or http_p}

    # No proxy configured: requests connects directly.
    return None


session = requests.Session()
session.headers.update(HEADERS)


class FetchError(RuntimeError):
    """A response worth retrying (throttle, block, or server error)."""


@retry(
    reraise=True,
    stop=stop_after_attempt(4),
    wait=wait_exponential(multiplier=1, min=1, max=12),
    # Retry network-level failures and FetchError only. An HTTPError from
    # raise_for_status() (e.g. a 404) is permanent, so it is not retried.
    retry=retry_if_exception_type(
        (
            requests.ConnectionError,
            requests.Timeout,
            requests.exceptions.ChunkedEncodingError,
            FetchError,
        )
    ),
)
def fetch_html(url: str) -> str:
    proxies = build_proxies_from_env()
    r = session.get(url, timeout=TIMEOUT, proxies=proxies)

    if r.status_code in (429, 403):
        raise FetchError(f"blocked/throttled: HTTP {r.status_code}")
    if r.status_code >= 500:
        raise FetchError(f"server error: HTTP {r.status_code}")

    # Any remaining 4xx raises requests.HTTPError and fails immediately.
    r.raise_for_status()
    return r.text


def polite_sleep(min_s: float = 0.6, max_s: float = 1.6) -> None:
    # Random pause between requests so the crawl is not perfectly periodic.
    time.sleep(random.uniform(min_s, max_s))
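
To make the retry settings above more concrete, here is a rough sketch of the waits a capped exponential backoff produces. The `backoff_schedule` helper is hypothetical and only illustrates the general doubling-with-a-cap idea. It does not call tenacity, and tenacity's exact timings may differ between versions, so treat the numbers as approximate.

```python
from __future__ import annotations


def backoff_schedule(
    attempts: int, base: float = 1.0, floor: float = 1.0, cap: float = 12.0
) -> list[float]:
    """Waits (in seconds) between attempts for a capped exponential backoff.

    Hypothetical helper for illustration only; it does not use tenacity.
    """
    waits = []
    # N attempts means N - 1 pauses: there is no wait after the final attempt.
    for n in range(attempts - 1):
        wait = base * (2 ** n)
        waits.append(max(floor, min(wait, cap)))
    return waits


schedule = backoff_schedule(attempts=4)
print(schedule)       # pauses before attempts 2, 3 and 4
print(sum(schedule))  # worst-case time spent sleeping for one URL
```

With four attempts, a URL that keeps failing costs only a few seconds of waiting before the error is re-raised, which keeps one bad page from stalling the whole crawl.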

Step 2: Crawl a tag feed (pagination)

Stack Overflow’s tag list pages have predictable structure.

A robust way to scrape is:

- select the question summary containers

- extract the title link (relative URL)

- normalize to full URL

from urllib.parse import urljoin

from bs4 import BeautifulSoup

BASE = "https://stackoverflow.com"


def tag_page_url(tag: str, page: int = 1, pagesize: int = 15) -> str:
    # Newest tab is most useful for incremental crawls
    return (
        f"{BASE}/questions/tagged/{tag}"
        f"?tab=Newest&page={page}&pagesize={pagesize}"
    )


def parse_tag_page(html: str) -> list[str]:
    soup = BeautifulSoup(html, "lxml")
    urls = []

    # New SO layout uses .s-post-summary for list items.
    for summary in soup.select("div.s-post-summary"):
        a = summary.select_one("h3 a.s-link")
        if not a:
            continue
        href = a.get("href")
        if not href:
            continue
        urls.append(urljoin(BASE, href))

    # Fallback for older structure
    if not urls:
        for q in soup.select("div.question-summary"):
            a = q.select_one("h3 a")
            if a and a.get("href"):
                urls.append(urljoin(BASE, a.get("href")))

    # De-dupe while preserving order
    return list(dict.fromkeys(urls))


def crawl_tag(tag: str, pages: int = 3) -> list[str]:
    all_urls = []
    seen = set()

    for p in range(1, pages + 1):
        url = tag_page_url(tag, page=p)
        html = fetch_html(url)
        batch = parse_tag_page(html)

        # A question can shift between pages while we crawl, so de-dupe across pages too.
        for u in batch:
            if u in seen:
                continue
            seen.add(u)
            all_urls.append(u)

        print("tag", tag, "page", p, "found", len(batch), "total", len(all_urls))
        polite_sleep()

    return all_urls
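
Because the Newest tab lists the most recent questions first, an incremental crawl can skip anything it has already seen. The sketch below assumes question URLs follow the /questions/QUESTION_ID/some-slug shape shown earlier and that question IDs increase over time. `question_id_from_url` and `only_new` are hypothetical helpers, not part of the scraper above, and the URLs in the demo are made up.

```python
from __future__ import annotations

import re

# Assumption: URLs look like https://stackoverflow.com/questions/<id>/<slug>
QUESTION_ID_RE = re.compile(r"/questions/(\d+)(?:/|$)")


def question_id_from_url(url: str) -> int | None:
    """Return the numeric question id, or None if the URL has no id."""
    m = QUESTION_ID_RE.search(url)
    return int(m.group(1)) if m else None


def only_new(urls: list[str], last_seen_id: int | None) -> list[str]:
    """Keep URLs whose question id is greater than last_seen_id."""
    if last_seen_id is None:
        # First run: nothing has been seen yet, so keep everything.
        return list(urls)
    fresh = []
    for u in urls:
        qid = question_id_from_url(u)
        if qid is not None and qid > last_seen_id:
            fresh.append(u)
    return fresh


demo_urls = [
    "https://stackoverflow.com/questions/105/newer-question",
    "https://stackoverflow.com/questions/100/already-seen",
]
print(only_new(demo_urls, last_seen_id=100))
```

If a whole page comes back with nothing new, that is a reasonable signal to stop paginating for that tag.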

Step 3: Parse a question page (title, votes, tags, accepted answer)

On a question page we’ll extract:

- title

- tags

- score (vote count)

- accepted answer (when present)

Selectors evolve, so we’ll use defensive parsing with fallbacks.

import re

from bs4 import BeautifulSoup

INT_RE = re.compile(r"-?\d+")


def parse_int(text: str) -> int | None:
    if not text:
        return None
    m = INT_RE.search(text.replace(",", ""))
    return int(m.group(0)) if m else None


def parse_question_page(html: str, url: str) -> dict:
    soup = BeautifulSoup(html, "lxml")

    # Title
    title_el = soup.select_one("h1 a.question-hyperlink") or soup.select_one("h1")
    title = title_el.get_text(" ", strip=True) if title_el else None

    # Tags
    tags = [t.get_text(strip=True) for t in soup.select("a.post-tag")]
    tags = [t for t in tags if t]

    # Score (question)
    score = None
    score_el = soup.select_one("div.js-vote-count")
    if score_el:
        score = parse_int(score_el.get("data-value") or score_el.get_text(" ", strip=True))

    # Accepted answer
    accepted = None

    # Common pattern: accepted answer has itemprop="acceptedAnswer" OR .accepted-answer
    accepted_block = (
        soup.select_one("div.answer.accepted-answer")
        or soup.select_one("div.answer[itemprop='acceptedAnswer']")
        or soup.select_one("div[itemprop='acceptedAnswer']")
    )

    if accepted_block:
        a_score = None
        a_score_el = accepted_block.select_one("div.js-vote-count")
        if a_score_el:
            a_score = parse_int(a_score_el.get("data-value") or a_score_el.get_text(" ", strip=True))

        # Main text content of the answer
        body = accepted_block.select_one("div.s-prose") or accepted_block.select_one("div.post-text")
        a_text = body.get_text("\n", strip=True) if body else ""

        accepted = {
            "score": a_score,
            "text": a_text,
        }

    return {
        "url": url,
        "title": title,
        "tags": tags,
        "score": score,
        "accepted_answer": accepted,
    }
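
The parser above does not capture the asked date from the feature list. One best-effort option is to read a `<time>` element that carries a `datetime` attribute. The sketch below assumes the question page marks the creation time with `itemprop="dateCreated"`, which may not hold for every layout. It uses the standard library's `HTMLParser` so it runs on its own; `AskedDateFinder` and `parse_asked_date` are hypothetical names, and the sample markup is made up.

```python
from __future__ import annotations

from html.parser import HTMLParser


class AskedDateFinder(HTMLParser):
    """Records the datetime attribute of the first matching <time> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.asked = None

    def handle_starttag(self, tag, attrs):
        if tag != "time" or self.asked is not None:
            return
        attr = dict(attrs)
        # Assumption: the question's creation time uses itemprop="dateCreated".
        if attr.get("itemprop") == "dateCreated" and attr.get("datetime"):
            self.asked = attr["datetime"]


def parse_asked_date(html: str) -> str | None:
    """Return the raw datetime string, or None when no match is found."""
    finder = AskedDateFinder()
    finder.feed(html)
    return finder.asked


sample = '<div><time itemprop="dateCreated" datetime="2024-01-15T09:30:00">asked</time></div>'
print(parse_asked_date(sample))
```

With BeautifulSoup, a comparable lookup would be `soup.select_one("time[itemprop='dateCreated']")` followed by `.get("datetime")`. Returning `None` on a miss keeps the field best-effort instead of failing the whole row.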

Step 4: Crawl + export (JSONL)

import json


def scrape_stackoverflow_tag(tag: str, pages: int = 2) -> list[dict]:
    urls = crawl_tag(tag, pages=pages)
    rows = []

    for i, url in enumerate(urls, start=1):
        try:
            html = fetch_html(url)
        except (requests.RequestException, FetchError) as e:
            # Retries are exhausted: skip this question instead of losing the whole run.
            print("question", i, "/", len(urls), "failed:", e)
            continue

        row = parse_question_page(html, url)
        rows.append(row)

        print("question", i, "/", len(urls), "title", (row.get("title") or "")[:60])
        polite_sleep()

    return rows


if __name__ == "__main__":
    rows = scrape_stackoverflow_tag("python", pages=2)

    # One JSON object per line (JSON Lines)
    with open("stackoverflow_python.jsonl", "w", encoding="utf-8") as f:
        for r in rows:
            f.write(json.dumps(r, ensure_ascii=False) + "\n")

    print("wrote stackoverflow_python.jsonl", len(rows))
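
The script above holds every row in memory and writes the file only at the end, so a crash late in the crawl loses everything collected so far. A hedged alternative is to append each row as soon as it is parsed and read the file back line by line. `append_jsonl` and `read_jsonl` are hypothetical helper names, and the demo rows are made up.

```python
from __future__ import annotations

import json
import os
import tempfile


def append_jsonl(path: str, row: dict) -> None:
    """Append one record as a single JSON line."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(row, ensure_ascii=False) + "\n")


def read_jsonl(path: str) -> list[dict]:
    """Load every non-empty line as one JSON object."""
    rows = []
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:
                rows.append(json.loads(line))
    return rows


# Demo in a temporary directory so nothing is written next to the script.
demo_path = os.path.join(tempfile.mkdtemp(), "demo.jsonl")
append_jsonl(demo_path, {"url": "https://stackoverflow.com/questions/1/a", "title": "First"})
append_jsonl(demo_path, {"url": "https://stackoverflow.com/questions/2/b", "title": "Second"})
print(len(read_jsonl(demo_path)))
```

Appending also pairs well with incremental crawls, since each run can add new rows to the same file.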

Responsible crawling tips (that actually matter)

- Crawl Newest and store the last-seen question id/time so you don’t re-fetch everything.

- Cache HTML responses to disk for debugging.

- Keep concurrency low (start with 1–2 workers).

- Add soft-block detection (429/403 spikes, HTML that looks like a challenge page).
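
As one way to act on the caching tip, the sketch below stores each page under a filename derived from a hash of its URL and only calls the fetcher on a cache miss. `cached_fetch`, `cache_path`, the `.html_cache` directory name, and the `fetch` parameter are assumptions for illustration. In the scraper above you would pass `fetch_html`; the demo uses a stand-in so it needs no network access.

```python
from __future__ import annotations

import hashlib
import tempfile
from pathlib import Path
from typing import Callable


def cache_path(cache_dir: Path, url: str) -> Path:
    """Map a URL to a stable filename inside cache_dir."""
    digest = hashlib.sha256(url.encode("utf-8")).hexdigest()
    return cache_dir / f"{digest}.html"


def cached_fetch(url: str, fetch: Callable[[str], str], cache_dir: Path) -> str:
    """Return cached HTML when present; otherwise fetch and store it."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    path = cache_path(cache_dir, url)
    if path.exists():
        return path.read_text(encoding="utf-8")
    html = fetch(url)
    path.write_text(html, encoding="utf-8")
    return html


# Stand-in fetcher that records how often it is called.
calls = []


def fake_fetch(url: str) -> str:
    calls.append(url)
    return "<html>demo</html>"


demo_dir = Path(tempfile.mkdtemp()) / ".html_cache"
first = cached_fetch("https://stackoverflow.com/questions/1/a", fake_fetch, demo_dir)
second = cached_fetch("https://stackoverflow.com/questions/1/a", fake_fetch, demo_dir)
print(len(calls))  # the second call is served from disk
```

A cache like this lets you re-run and debug the parsers against saved HTML without sending any new requests.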

Where ProxiesAPI fits (honestly)

Stack Overflow is generally scrapeable without proxies at small volumes.

But once you scale to:

- many tags

- many pages per tag

- deep question/answer extraction

…your request volume climbs quickly. ProxiesAPI helps keep the crawl stable by routing requests through proxies, reducing throttles and improving success rates (especially across many parallel jobs).
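
A quick back-of-the-envelope estimate shows how fast this adds up. The sketch assumes one request per tag page plus one per listed question, ignores questions shared between tags (so it is an upper bound), and uses the midpoint of the 0.6 to 1.6 second `polite_sleep` range. `estimate_requests` is a hypothetical helper.

```python
def estimate_requests(tags: int, pages_per_tag: int, pagesize: int = 15) -> int:
    """Upper bound on requests: every tag page plus every question it lists."""
    feed_requests = tags * pages_per_tag
    question_requests = feed_requests * pagesize
    return feed_requests + question_requests


total = estimate_requests(tags=10, pages_per_tag=20)
avg_sleep_s = (0.6 + 1.6) / 2

print(total)                             # total requests
print(round(total * avg_sleep_s / 60))   # minutes spent sleeping, single worker
```

Under these assumptions, 10 tags at 20 pages each comes to 3,200 requests and roughly an hour of sleep time alone for a single worker, before counting any retries.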
