Scrape Restaurant Data from TripAdvisor (Reviews, Ratings, and Locations)

TripAdvisor is one of the most useful “directory + reviews” datasets on the web: you can turn restaurant listings into lead lists, build market research dashboards, or monitor reputation over time.

In this tutorial we’ll build a real Python scraper that:

- starts from a city restaurant directory page

- extracts restaurant listing URLs

- visits each listing and extracts: name, rating, review count, address, and best-effort cuisine tags (when present)

- exports JSON + CSV

- uses ProxiesAPI in the network layer (so scaling doesn’t mean re-architecting)

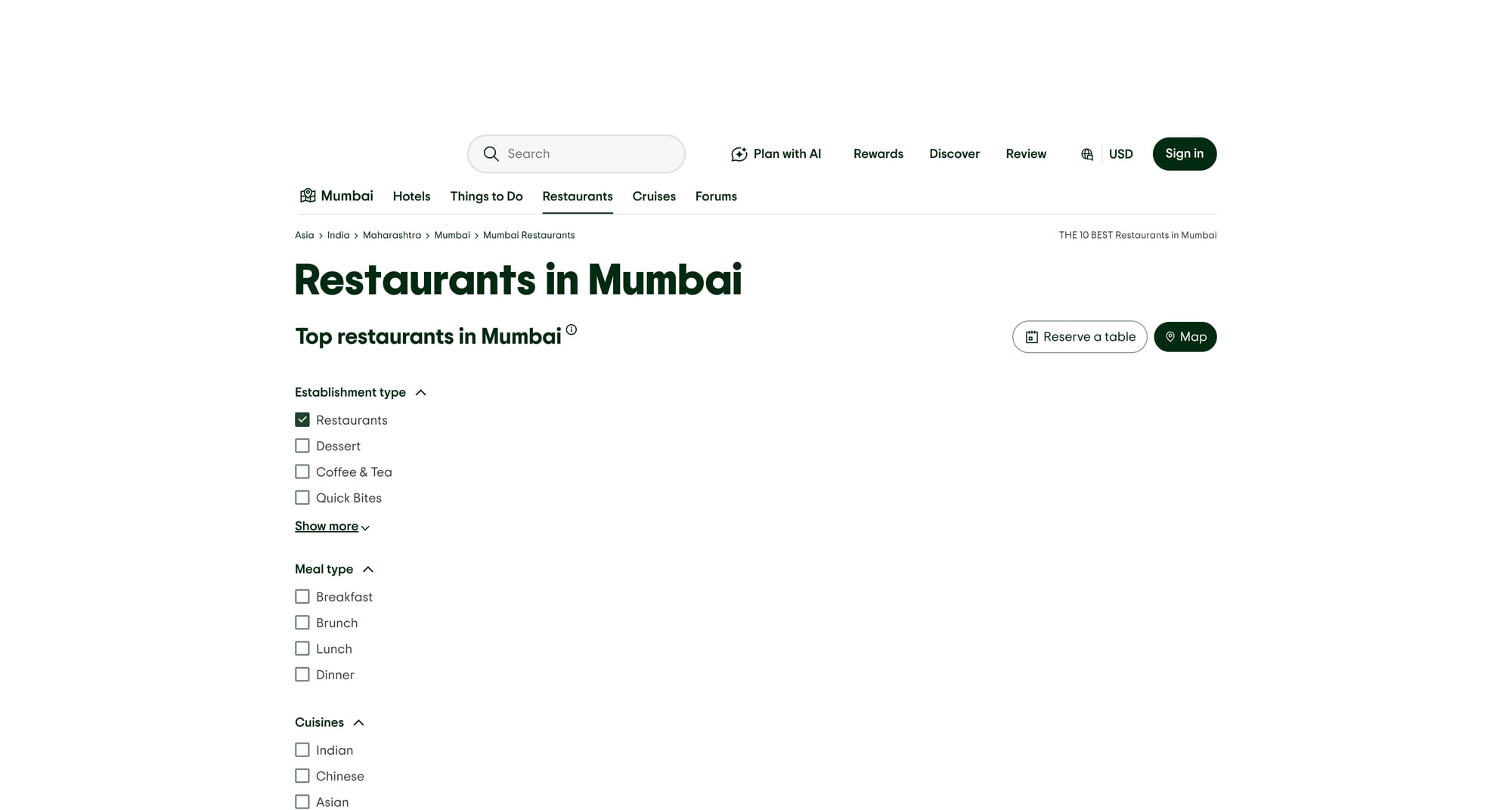

We’ll also include a screenshot of the page we’re parsing.

Directory sites can block quickly when you scale from 10 URLs to 10,000. ProxiesAPI fits cleanly into your fetch layer so retries and rotation are one small change — not a rewrite.

Important notes (before you scrape)

- TripAdvisor can be aggressive about bot traffic. HTML structure can change and some pages may be dynamically rendered or localized.

- Respect terms, robots, and local law. Use reasonable rates and only collect what you truly need.

- This guide is designed to be honest and practical: if a selector doesn’t exist on a specific page, we handle it gracefully.
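As a concrete way to act on the robots note above, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt text, the `my-scraper` user agent, and the `example.test` URLs are placeholders for illustration only; they are not TripAdvisor's actual rules, so load the real file from the site you crawl.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only to make the sketch runnable offline.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_text: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser.can_fetch(user_agent, url)


public_ok = is_allowed(SAMPLE_ROBOTS, "my-scraper", "https://example.test/public/page.html")
private_ok = is_allowed(SAMPLE_ROBOTS, "my-scraper", "https://example.test/private/page.html")
print(public_ok, private_ok)
```

In a real run you would call a check like this once per URL before fetching and skip anything it rejects.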

What we’re scraping (URL patterns + page types)

TripAdvisor usually has:

- Directory pages (lists of restaurants for a location)

- Listing pages (details + reviews summary)

A typical “restaurants in a city” directory URL looks like:

https://www.tripadvisor.com/Restaurants-g304554-Mumbai_Maharashtra.html

A typical restaurant listing URL often includes something like Restaurant_Review-... in the path.

Because TripAdvisor runs many experiments, don’t overfit to a single city. Our crawler will:

- collect listing URLs from any anchors that look like restaurant listing links

- dedupe

- scrape detail pages with robust parsing

Setup

python -m venv .venv

source .venv/bin/activate

pip install requests beautifulsoup4 lxml pandas

We’ll use:

- requests for HTTP

- BeautifulSoup (lxml) for parsing

- pandas for quick CSV export (optional but convenient)

ProxiesAPI: a clean fetch layer

ProxiesAPI works by fetching the target URL through their endpoint:

http://api.proxiesapi.com/?auth_key=YOUR_KEY&url=https://example.com

We’ll wrap that in a small function so everything else stays normal requests + HTML.

import os
import random
import time
import urllib.parse

import requests

PROXIESAPI_KEY = os.environ.get("PROXIESAPI_KEY", "")
TIMEOUT = (10, 40)  # (connect, read) timeouts in seconds

session = requests.Session()


def proxiesapi_url(target_url: str) -> str:
    if not PROXIESAPI_KEY:
        raise RuntimeError("Set PROXIESAPI_KEY in your environment")
    return (
        "http://api.proxiesapi.com/?auth_key="
        + urllib.parse.quote(PROXIESAPI_KEY, safe="")
        + "&url="
        + urllib.parse.quote(target_url, safe="")
    )


def fetch(url: str, *, use_proxiesapi: bool = True, max_retries: int = 4) -> str:
    """Fetch HTML with basic retry/backoff.

    ProxiesAPI is used by default. If you want to debug without it,
    call fetch(url, use_proxiesapi=False).
    """
    # Build the URL outside the retry loop so a missing key fails immediately
    # instead of being retried.
    final_url = proxiesapi_url(url) if use_proxiesapi else url
    last_err = None
    for attempt in range(1, max_retries + 1):
        try:
            r = session.get(
                final_url,
                timeout=TIMEOUT,
                headers={
                    # keep headers simple; ProxiesAPI can still pass them through
                    "User-Agent": (
                        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
                        "AppleWebKit/537.36 (KHTML, like Gecko) "
                        "Chrome/123.0 Safari/537.36"
                    ),
                    "Accept-Language": "en-US,en;q=0.9",
                },
            )
            # ProxiesAPI returns the upstream HTML; you still get normal status codes.
            r.raise_for_status()
            html = r.text
            if not html or len(html) < 2000:
                raise RuntimeError(f"Suspiciously small HTML ({len(html)} chars)")
            return html
        except (requests.RequestException, RuntimeError) as e:
            last_err = e
            if attempt < max_retries:
                # exponential backoff capped at 10s, plus up to 1s of jitter
                sleep_s = min(10, 2 ** (attempt - 1)) + random.random()
                time.sleep(sleep_s)
    raise RuntimeError(f"Fetch failed after {max_retries} attempts: {last_err}") from last_err
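To see why the target URL is percent-encoded before it is appended, here is a small standalone check. It mirrors the shape of `proxiesapi_url()` above; the `build_proxy_url` name, the `demo-key` value, and the `example.com` search URL are placeholders, not real credentials or endpoints you need.

```python
from urllib.parse import parse_qs, quote, urlsplit


def build_proxy_url(auth_key: str, target_url: str) -> str:
    """Wrap target_url in the single-endpoint format described above."""
    # safe="" also encodes ":", "/", "?" and "&", so the target's own query
    # string cannot leak into the proxy endpoint's parameters.
    return (
        "http://api.proxiesapi.com/?auth_key="
        + quote(auth_key, safe="")
        + "&url="
        + quote(target_url, safe="")
    )


target = "https://example.com/search?q=pizza&page=2"
wrapped = build_proxy_url("demo-key", target)

# Parsing the wrapped URL back shows exactly two parameters, and the
# decoded "url" value round-trips to the original target.
params = parse_qs(urlsplit(wrapped).query)
print(wrapped)
print(params["url"][0] == target)
```

Without the encoding, `&page=2` would be read as a separate parameter of the proxy endpoint rather than as part of the target URL.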

Step 1: extract restaurant listing URLs from a directory page

TripAdvisor directory pages contain a lot of links. We want restaurant detail pages.

A practical heuristic:

- keep <a href="..."> links where the URL contains "/Restaurant_Review"

- normalize to absolute URLs

- dedupe

from urllib.parse import urljoin

from bs4 import BeautifulSoup

BASE = "https://www.tripadvisor.com"


def extract_restaurant_urls(directory_html: str) -> list[str]:
    soup = BeautifulSoup(directory_html, "lxml")
    urls = []
    seen = set()
    for a in soup.select("a[href]"):
        href = a.get("href")
        if not href:
            continue
        # TripAdvisor restaurant detail pages often include this token.
        if "/Restaurant_Review" not in href:
            continue
        abs_url = urljoin(BASE, href)
        # remove URL fragments
        abs_url = abs_url.split("#")[0]
        if abs_url in seen:
            continue
        seen.add(abs_url)
        urls.append(abs_url)
    return urls

Quick sanity check

start_url = "https://www.tripadvisor.com/Restaurants-g304554-Mumbai_Maharashtra.html"

html = fetch(start_url)

urls = extract_restaurant_urls(html)

print("found listing urls:", len(urls))

print(urls[:5])

If you get 0 URLs, inspect the HTML your scraper actually received (your browser may be served a different page):

- the site may be serving a bot-interstitial

- the structure may be different for your locale

That’s exactly why you want the retryable fetch layer first.
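A quick way to tell a block page from a layout change is to summarize what came back. The sketch below is one possible helper, not part of the scraper above: the `diagnose_html` name and the `BLOCK_HINTS` phrases are assumptions chosen for illustration, and real interstitials may use different wording.

```python
import re
from pathlib import Path
from typing import Optional

# Hypothetical phrases that often show up on block or challenge pages.
BLOCK_HINTS = ("captcha", "access denied", "verify you are human", "unusual traffic")


def diagnose_html(html: str, dump_path: Optional[str] = None) -> dict:
    """Summarize a fetched page: title, size, listing-link count, block hints."""
    m = re.search(r"<title[^>]*>(.*?)</title>", html, flags=re.I | re.S)
    title = re.sub(r"\s+", " ", m.group(1)).strip() if m else None
    lowered = html.lower()
    if dump_path:
        # Save the raw HTML so it can be opened and inspected later.
        Path(dump_path).write_text(html, encoding="utf-8")
    return {
        "title": title,
        "length": len(html),
        "listing_links": lowered.count("/restaurant_review"),
        "block_hints": [h for h in BLOCK_HINTS if h in lowered],
    }


sample = (
    "<html><head><title> Access Denied </title></head>"
    "<body>Please verify you are human.</body></html>"
)
report = diagnose_html(sample)
print(report)
```

A page with zero listing links and a non-empty `block_hints` list points at blocking; zero links with a normal title points at a selector or locale difference.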

Step 2: parse a restaurant listing page (name, rating, reviews, address)

TripAdvisor listing pages vary a bit, so we’ll use multiple selector fallbacks.

Typical things to look for:

- Name: usually in an h1

- Rating: often exposed via aria-label like “4.5 of 5 bubbles”

- Review count: text containing “reviews”

- Address: often near “Address” section, sometimes in a <span> with address-like content

Here’s a resilient parser that:

- gets the <title> too (useful for debugging)

- extracts best-effort fields

import re

from bs4 import BeautifulSoup


def clean_text(s: str | None) -> str | None:
    """Collapse whitespace runs; return None for empty input."""
    if not s:
        return None
    out = re.sub(r"\s+", " ", s).strip()
    return out or None


def parse_float_from_text(text: str | None) -> float | None:
    """Return the first number found in text as a float."""
    if not text:
        return None
    m = re.search(r"(\d+(?:\.\d+)?)", text)
    return float(m.group(1)) if m else None


def parse_int_from_text(text: str | None) -> int | None:
    """Return the first integer found in text, ignoring thousands separators."""
    if not text:
        return None
    m = re.search(r"(\d[\d,]*)", text)
    return int(m.group(1).replace(",", "")) if m else None


def parse_restaurant(listing_html: str, url: str) -> dict:
    soup = BeautifulSoup(listing_html, "lxml")
    title = clean_text(soup.title.get_text(" ", strip=True) if soup.title else None)

    # Name
    name = None
    h1 = soup.select_one("h1")
    if h1:
        name = clean_text(h1.get_text(" ", strip=True))

    # Rating: look for aria-label with "of 5" / "bubbles"
    rating = None
    rating_node = soup.select_one('[aria-label*="of 5" i]') or soup.select_one('[aria-label*="bubbles" i]')
    if rating_node:
        rating = parse_float_from_text(rating_node.get("aria-label"))

    # Review count: look for text like "1,234 reviews"
    review_count = None
    # Many pages have multiple occurrences; pick the largest plausible number.
    # Only digits directly followed by "review(s)" are counted, so an unrelated
    # number elsewhere in a large container is not mistaken for the count.
    page_text = soup.get_text(" ", strip=True)
    counts = []
    for m in re.finditer(r"(\d[\d,]*)\s+reviews?\b", page_text, flags=re.I):
        n = parse_int_from_text(m.group(1))
        if n is not None:
            counts.append(n)
    if counts:
        review_count = max(counts)

    # Cuisine tags are nice-to-have. The bare "span" in this selector makes it
    # very broad, so treat the result as a guess.
    cuisines = []
    for a in soup.select('a[href*="RestaurantSearch"], a[href*="FindRestaurants"], a[href*="Restaurants"], span'):
        t = clean_text(a.get_text(" ", strip=True))
        if not t:
            continue
        # avoid very long strings
        if len(t) > 30:
            continue
        # skip common UI labels that are not cuisines
        if t.lower() in {"open now", "closed now", "menu", "website"}:
            continue
        # heuristic: tags sometimes appear as small pills; keep a few
        if any(ch.isalpha() for ch in t) and len(t.split()) <= 3:
            cuisines.append(t)
    cuisines = list(dict.fromkeys(cuisines))[:8]

    # Address: heuristic, find something that looks like an address block
    address = None
    address_candidates = []
    for el in soup.select("span, div"):
        t = clean_text(el.get_text(" ", strip=True))
        if not t:
            continue
        # crude filter: addresses often have commas and numbers
        if "," in t and any(ch.isdigit() for ch in t) and 10 <= len(t) <= 140:
            address_candidates.append(t)
    if address_candidates:
        # pick the shortest candidate (often the cleanest)
        address = sorted(address_candidates, key=len)[0]

    return {
        "url": url,
        "page_title": title,
        "name": name,
        "rating": rating,
        "review_count": review_count,
        "address": address,
        "cuisines_guess": cuisines,
    }
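It helps to see what the two regex helpers return on typical strings. The snippet below repeats them (without type hints) so it runs standalone; the input strings are made-up examples, not text captured from a real listing.

```python
import re


def parse_float_from_text(text):
    """Return the first number found in text as a float, or None."""
    if not text:
        return None
    m = re.search(r"(\d+(?:\.\d+)?)", text)
    return float(m.group(1)) if m else None


def parse_int_from_text(text):
    """Return the first integer found in text (commas stripped), or None."""
    if not text:
        return None
    m = re.search(r"(\d[\d,]*)", text)
    return int(m.group(1).replace(",", "")) if m else None


rating = parse_float_from_text("4.5 of 5 bubbles")
count = parse_int_from_text("1,234 reviews")
misleading = parse_int_from_text("Reviewed in 2024")
nothing = parse_int_from_text("No numbers here")
print(rating, count, misleading, nothing)
```

Both helpers take the first number they find, so the third call returns a year rather than a count. Feeding them short, targeted strings keeps that from skewing results.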

Step 3: crawl N listings and export JSON + CSV

Now we wire it all together.

import json

import pandas as pd


def scrape_city(start_url: str, limit: int = 25) -> list[dict]:
    directory_html = fetch(start_url)
    listing_urls = extract_restaurant_urls(directory_html)
    total = min(limit, len(listing_urls))

    out = []
    for i, url in enumerate(listing_urls[:limit], start=1):
        html = fetch(url)
        data = parse_restaurant(html, url)
        out.append(data)
        print(f"[{i}/{total}]", data.get("name"), data.get("rating"), data.get("review_count"))
        # be polite; directory sites hate bursts
        time.sleep(1.0 + random.random())
    return out


if __name__ == "__main__":
    START = "https://www.tripadvisor.com/Restaurants-g304554-Mumbai_Maharashtra.html"
    rows = scrape_city(START, limit=20)

    with open("tripadvisor_restaurants.json", "w", encoding="utf-8") as f:
        json.dump(rows, f, ensure_ascii=False, indent=2)

    pd.DataFrame(rows).to_csv("tripadvisor_restaurants.csv", index=False)
    print("saved tripadvisor_restaurants.json and tripadvisor_restaurants.csv", len(rows))
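One detail to watch in the CSV export: `cuisines_guess` is a list, and a list written straight into a CSV cell ends up as its Python repr. A possible workaround is to join list values into one string first. The sketch below uses the standard `csv` module so it runs without pandas; `flatten_row` is a hypothetical helper and the rows are placeholder data.

```python
import csv
import io


def flatten_row(row: dict, sep: str = "; ") -> dict:
    """Join list values into one string so each CSV cell holds plain text."""
    return {
        key: sep.join(map(str, value)) if isinstance(value, list) else value
        for key, value in row.items()
    }


# Placeholder rows shaped like the parser output (fields trimmed for brevity).
rows = [
    {"name": "Foo Restaurant", "rating": 4.5, "cuisines_guess": ["Indian", "Seafood"]},
    {"name": "Bar Kitchen", "rating": 4.0, "cuisines_guess": []},
]

buf = io.StringIO()
writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
writer.writeheader()
writer.writerows(flatten_row(r) for r in rows)
csv_text = buf.getvalue()
print(csv_text)
```

The same `flatten_row` step can be applied to each row before building the DataFrame if you prefer to keep the pandas export.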

Output example (what you should see)

You should see a stream like:

[1/20] Foo Restaurant 4.5 1234

[2/20] Bar Kitchen 4.0 512

...

saved tripadvisor_restaurants.json and tripadvisor_restaurants.csv 20

Troubleshooting (the stuff that actually breaks)

1) “Suspiciously small HTML”

This usually means you got:

- an interstitial page

- a consent/geo page

- a bot-block response

Fixes:

- increase retry count

- add a longer delay between requests

- crawl fewer URLs per run and schedule incremental jobs

2) Selectors don’t match

TripAdvisor’s DOM changes. Don’t panic:

- print page_title for 3 failing pages

- inspect HTML for h1 and rating aria-label

- update the selector list (keep it modular)
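One way to keep the selector list modular is to hold the fallbacks in plain lists and try them in order. This is a sketch under assumptions: `first_match` is a hypothetical helper, the `data-testid` selector is invented for illustration and is not a known TripAdvisor attribute, and `html.parser` is used only to keep the example dependency-light (the tutorial's `lxml` works the same way).

```python
from bs4 import BeautifulSoup

# Ordered fallbacks; when the DOM changes, only these lists need editing.
NAME_SELECTORS = ["h1", '[data-testid="restaurant-name"]']  # second entry is hypothetical
RATING_SELECTORS = ['[aria-label*="of 5" i]', '[aria-label*="bubbles" i]']


def first_match(soup, selectors):
    """Return the first element matched by any selector, trying them in order."""
    for selector in selectors:
        node = soup.select_one(selector)
        if node is not None:
            return node
    return None


sample = '<div><h1> Foo Restaurant </h1><svg aria-label="4.5 of 5 bubbles"></svg></div>'
soup = BeautifulSoup(sample, "html.parser")

name_node = first_match(soup, NAME_SELECTORS)
rating_node = first_match(soup, RATING_SELECTORS)
missing = first_match(soup, ["h3"])

print(name_node.get_text(strip=True))
print(rating_node["aria-label"])
print(missing)
```

When a page stops parsing, you add or reorder an entry in the relevant list and leave the parsing function itself alone.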

3) Pagination / more URLs

Directory pages are paginated. Once you confirm your extract_restaurant_urls() works, add a “discover pages” step that follows links that look like “Next”.

A simple approach is:

- collect restaurant URLs from page 1

- find a next link (a[aria-label*="Next"] etc.)

- repeat for pages=K
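The steps above can be sketched as a small loop. Everything here is an assumption to adapt: `find_next_page_url` and `crawl_directory` are hypothetical names, the "Next" `aria-label` selector may not match the live markup, and the demo runs against two fake in-memory pages on `example.test` instead of the network. In the real scraper you would pass `fetch` and `extract_restaurant_urls` as the two callables.

```python
from typing import Callable, Optional
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def find_next_page_url(directory_html: str, page_url: str) -> Optional[str]:
    """Return the absolute URL of the 'Next' link, or None if there is none."""
    soup = BeautifulSoup(directory_html, "html.parser")
    node = soup.select_one('a[aria-label*="Next" i][href]')
    return urljoin(page_url, node["href"]) if node else None


def crawl_directory(start_url: str, fetch_fn: Callable, extract_fn: Callable, max_pages: int = 3) -> list:
    """Collect listing URLs across up to max_pages directory pages."""
    found, seen_urls, seen_pages = [], set(), set()
    page_url = start_url
    while page_url and page_url not in seen_pages and len(seen_pages) < max_pages:
        seen_pages.add(page_url)
        html = fetch_fn(page_url)
        for url in extract_fn(html):
            if url not in seen_urls:
                seen_urls.add(url)
                found.append(url)
        page_url = find_next_page_url(html, page_url)
    return found


# Offline demo: two fake directory pages keyed by URL.
DEMO_BASE = "https://example.test"
pages = {
    DEMO_BASE + "/page1.html": '<a href="/Restaurant_Review-a.html">A</a>'
                               '<a aria-label="Next page" href="/page2.html">Next</a>',
    DEMO_BASE + "/page2.html": '<a href="/Restaurant_Review-b.html">B</a>',
}


def demo_extract(html: str) -> list:
    soup = BeautifulSoup(html, "html.parser")
    return [urljoin(DEMO_BASE, a["href"]) for a in soup.select('a[href*="/Restaurant_Review"]')]


found = crawl_directory(DEMO_BASE + "/page1.html", pages.__getitem__, demo_extract, max_pages=3)
print(found)
```

The `seen_pages` set guards against a "Next" link that loops back, and `max_pages` plays the role of K.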

Where ProxiesAPI fits (no hype)

TripAdvisor is exactly the kind of target where scrapers get unstable as you scale.

ProxiesAPI doesn’t magically make every request succeed — but it gives you:

- a simple single-endpoint fetch model

- rotation/retry room when your own IP starts getting throttled

- an easy path from “works on my laptop” → “runs nightly”

If you keep your scraper architecture clean (fetch → parse → export), swapping in ProxiesAPI is a small change.

QA checklist

- Directory page returns enough listing URLs

- At least 5 listings parse a name + rating

- You export both JSON and CSV

- You’re rate-limiting (no bursts)

- You can re-run without duplicating if you add a simple “seen URL” store
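For the last checklist item, a "seen URL" store can be as small as a JSON file. The sketch below is one possible shape, assuming a single process writes the file; the `SeenStore` class, the `seen_urls.json` filename, and the `example.test` URLs are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


class SeenStore:
    """Persist already-scraped URLs in a JSON file so re-runs can skip them."""

    def __init__(self, path):
        self.path = Path(path)
        if self.path.exists():
            self.seen = set(json.loads(self.path.read_text(encoding="utf-8")))
        else:
            self.seen = set()

    def filter_new(self, urls):
        """Return only the URLs that have not been marked yet, in order."""
        return [u for u in urls if u not in self.seen]

    def mark(self, url):
        """Record url as done and write the store to disk right away."""
        self.seen.add(url)
        self.path.write_text(json.dumps(sorted(self.seen)), encoding="utf-8")


all_urls = ["https://example.test/a", "https://example.test/b"]

with tempfile.TemporaryDirectory() as tmp:
    store_path = Path(tmp) / "seen_urls.json"

    first_run = SeenStore(store_path)
    todo = first_run.filter_new(all_urls)
    first_run.mark(todo[0])  # pretend only the first URL was scraped

    # A fresh instance simulates the next run reading the file back.
    next_run = SeenStore(store_path).filter_new(all_urls)

print(todo)
print(next_run)
```

Calling `mark()` after each successful parse means an interrupted run resumes where it stopped instead of starting over.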
